Last year, a retail banking team ran a pilot we've seen repeated across dozens of organisations. They invested heavily in AI call simulations, and their reps crushed it: crisp openers, clean objection handling, discovery questions fired in textbook sequence. Then those same reps walked onto the branch floor and fell apart. Customers who wandered in with vague questions got steamrolled by scripted pitches. Reps who could handle a 90-second phone objection couldn't manage 10 seconds of silence from a couple exchanging glances across a desk. Practice scores were high. Conversion rates in live meetings stayed flat.

The gap wasn't effort or talent. It was fidelity. The training didn't match the job.

This pattern should worry any L&D leader or sales director running face-to-face teams on phone-based roleplay. The better your reps get at simulated calls, the more confident they feel walking into rooms they're not actually prepared for. Below is a diagnosis of why that gap exists, what it costs, and a concrete transition plan you can bring to your next team meeting.

What Makes Face-to-Face Sales Conversations Harder to Train

Phone calls have a built-in structure. Someone dials, someone answers, and both parties know the format. Face-to-face selling lacks that scaffold. A customer might walk into a showroom mid-conversation with their partner. A prospect at a property showing might start with small talk about the neighbourhood and never signal when "the meeting" has begun.

The first two minutes of an in-person interaction are often ambiguous and uncomfortable. Reps trained exclusively through call simulations tend to default to aggressive scripted openers that feel robotic in a live setting. They treat silence as dead air to fill rather than space where the customer is thinking. They miss the physical cues (a crossed arm, a glance toward the door, a partner leaning in) that experienced field reps read instinctively.

Phone selling rewards verbal precision. Face-to-face selling rewards situational judgment. These are different skills, and training one does not build the other.

Why Reps Trained on Call Simulations Struggle in Live Meetings

Call simulations reward message recall and linear talk-track execution. That works well for inside sales, SDR outreach, and support-to-sales handoffs. But live meetings rarely follow a predictable structure, and the skills that earn high scores in phone practice can backfire on a showroom floor or across a desk.

Three failure modes show up repeatedly:

- Misreading silence as disengagement. On a phone call, 5 seconds of silence is awkward. In a face-to-face meeting, 5 seconds of silence often means the customer is processing, consulting a partner nonverbally, or building toward a question. Reps trained on calls rush to fill the gap, frequently derailing a moment where the buyer was about to move forward.

- Skipping or compressing discovery. Phone scripts treat discovery as a discrete phase: ask three questions, then transition. In a live meeting, discovery happens throughout the conversation and often through observation, not questions. A customer picking up a product, lingering in one room of a property, or returning to the same brochure page is giving you information. Call-trained reps miss it because they're listening for verbal signals only.

- Freezing on unscripted moments. A customer jumps straight to pricing. A partner raises an objection the rep has never heard. A child starts crying and the meeting pauses for two minutes. These moments are routine in field sales and nearly absent from phone simulations. Reps who have never practised recovering from an interruption or redirecting after a tangent have no muscle memory to draw on.

The popular approach that makes this worse: many teams use call recording tools like Gong or Chorus to train field reps, pulling "best call" examples for coaching sessions. The logic seems sound: learn from what works. But the best phone calls and the best in-person meetings look nothing alike. A top-performing call is tight, structured, and verbally efficient. A top-performing face-to-face meeting often looks loose, patient, and full of pauses that would tank a call score. Training field reps on call recordings reinforces exactly the behaviours that underperform in the room.

How In-Person AI Roleplay Actually Differs

The distinction goes beyond the label on the scenario. It is what the practice session forces the rep to handle.

Genuine in-person AI roleplay frames scenarios around physical settings: a retail floor where a customer is browsing, a branch office where a couple arrives for a mortgage consultation, a property showing where the buyer's agent is present. The rep must manage contextual cues and conversational flow that mirror real meetings rather than follow a clean sequence of opening, discovery, objection, and close.

What changes in practice:

- Unstructured openings replace scripted intros. The rep must read whether the customer wants to talk, browse, or be acknowledged and left alone.

- Interruptions and tangents are built into scenarios. The AI buyer changes the subject, asks something unexpected, or pauses mid-sentence.

- Pacing becomes a scoreable skill. Did the rep rush? Did they let a silence breathe? Did they match the energy of a hesitant buyer or bulldoze through?

- Scoring shifts from talk-track adherence to situational judgment. The rubric evaluates whether the rep made good decisions in ambiguous moments, not whether they said the right words in the right order.

That higher-fidelity practice builds the skill that separates competent field reps from top performers: the ability to adapt in real time when the conversation doesn't follow the script.

When to Make the Switch (And How to Know You're Late)

If the majority of your revenue-generating conversations take place in a shared physical space (retail, financial advising, real estate, automotive, or field sales), the transition is overdue.

The clearest signal is a gap between practice performance and live meeting performance. If reps score well in roleplay but managers consistently observe underperformance in client meetings, the training format lacks fidelity to the actual selling environment. That disconnect is not a coaching problem. It is a design problem.

Prioritise the transition for roles where:

- The opening minutes of a conversation are unstructured and customer-led

- Body language and physical cues carry as much information as words

- Conversational pacing directly influences whether a customer stays or disengages

- More than one decision-maker is frequently present in the room

If three or more of those conditions apply, phone-based roleplay is building the wrong habits.
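
For teams that track role attributes in a spreadsheet or HR system, the rule of thumb above can be expressed as a simple count. The sketch below is illustrative only: the `RoleProfile` fields, the function names, and the three example roles are hypothetical, and the threshold of three simply mirrors the guideline stated above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoleProfile:
    """Hypothetical record of the four checklist conditions for one role."""
    name: str
    unstructured_openings: bool       # opening minutes are customer-led
    physical_cues_matter: bool        # body language carries as much as words
    pacing_affects_engagement: bool   # pacing decides whether a customer stays
    multiple_decision_makers: bool    # more than one decision-maker in the room


def conditions_met(role: RoleProfile) -> int:
    """Count how many of the four conditions apply to this role."""
    return sum([
        role.unstructured_openings,
        role.physical_cues_matter,
        role.pacing_affects_engagement,
        role.multiple_decision_makers,
    ])


def should_prioritise(role: RoleProfile, threshold: int = 3) -> bool:
    """Apply the 'three or more conditions' rule of thumb."""
    return conditions_met(role) >= threshold


# Example roles (made up for illustration, not real audit data).
showroom = RoleProfile("Showroom rep", True, True, True, True)
sdr = RoleProfile("Outbound SDR", False, False, False, False)
branch = RoleProfile("Branch adviser", True, False, True, True)

for role in (showroom, sdr, branch):
    print(role.name, conditions_met(role), should_prioritise(role))
```

The count is deliberately unweighted. If one condition matters far more for a given role, a manager's judgment should override the tally.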

A 3-Step Transition Plan You Can Start This Week

You do not need to rip out your existing training stack overnight. But you do need to start realigning practice with reality.

Step 1: Audit Which Roles Are Truly Face-to-Face

Not every rep on your team sells in person. Map your roles into three buckets:

Most teams skip this step and train everyone the same way. That is how you end up with showroom reps practising cold call openers.

Step 2: Redesign Scenarios Around Specific Physical Environments

Generic scenarios ("a customer has an objection about price") produce generic skills. Effective in-person scenarios are rooted in a place and a situation:

- "A couple walks into the branch. One partner is enthusiastic; the other is checking their phone. You have 30 seconds before they reach the counter."

- "You're midway through a property tour when the buyer's agent asks a question that contradicts what you've said about the neighbourhood."

- "A customer on the retail floor has been browsing for 8 minutes without making eye contact. Another customer just walked in and is looking directly at you."

Build 5 to 7 scenarios that reflect the actual environments and situations your reps encounter most frequently. Interview your top-performing field reps to source them. They will tell you the moments that matter.

Step 3: Shift Scoring from Script Adherence to Situational Judgment

This is where most transitions stall. Teams switch to in-person scenarios but keep scoring reps on whether they hit every talk-track checkpoint. That defeats the purpose.

A scoring rubric for in-person roleplay should evaluate:

Share this rubric with managers before rolling out new scenarios. If coaches still score on talk-track compliance, reps will optimise for the wrong behaviours regardless of how good the scenarios are.

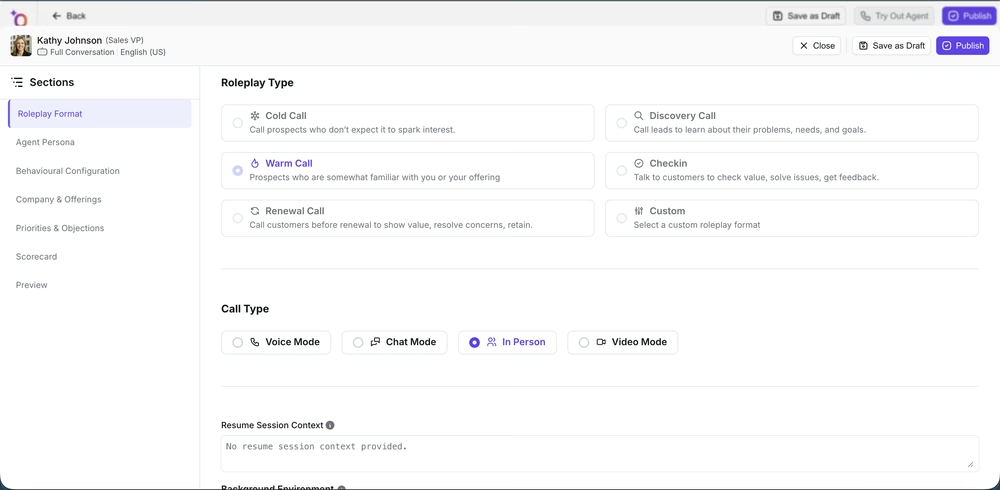

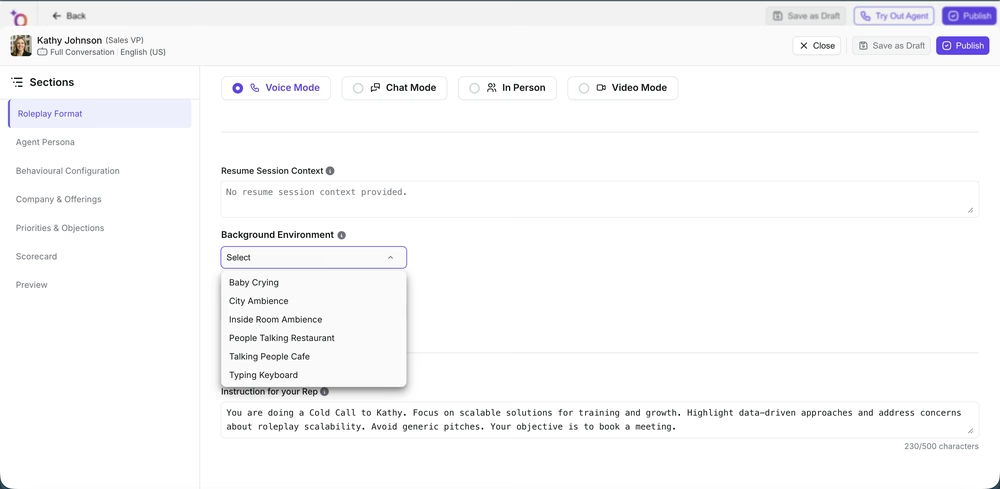

Choosing a Platform That Actually Fits

Most AI roleplay tools were built for phone and video sales, then relabelled for "in-person" use. Knowing the difference saves you a wasted procurement cycle.

Non-negotiable capabilities:

- Scenario customisation tied to physical environments, not just "industry templates." You need to set up a retail counter, a branch office, a property tour, and have the AI buyer behave differently in each.

- Scoring that measures pacing, listening, and adaptive responses, not just keyword matching or talk-track completion.

- Coaching data tied to specific scenario moments. Managers need to see "the rep rushed past the hesitation at 1:42," not just "the rep scored 72% overall."

Red flags that a platform is just repurposed phone simulation:

- Every scenario follows the same open-discover-object-close sequence regardless of setting

- Scoring weights verbal output over timing, pauses, and adaptiveness

- The "in-person" mode is identical to the phone mode with a different background image

Outdoo is purpose-built for in-person selling environments and focuses on the conversational dynamics that define face-to-face meetings: unstructured openings, nonverbal responsiveness, and situational pacing. For teams where the room is the selling environment, it is worth a serious evaluation alongside whatever you are currently using.

Conclusion

Phone-based roleplay is excellent training for inside sales teams. The problem is that organisations apply it universally, including to roles where the selling environment looks, sounds, and feels nothing like a phone call. Every week that field reps practise on call simulations, they build confidence in skills that do not transfer to the room where the deal actually happens.

For L&D leaders and sales directors running retail, real estate, financial advising, or field sales teams, in-person AI roleplay is the stronger choice because it trains the skill that actually drives close rates: situational judgment under ambiguity. If you're evaluating platforms, Outdoo is built specifically for this. The teams that close the fidelity gap first won't just see better conversion numbers. They'll stop losing the deals they never knew they were losing: the ones where the customer smiled, said "we'll think about it," and never came back.

Frequently Asked Questions

What makes face-to-face sales conversations harder to train?

Face-to-face selling requires reps to read body language, manage silence, and navigate unstructured openings that do not exist on phone calls. The first two minutes of an in-person meeting are often ambiguous, with no clear signal for when "the meeting" has started. Reps trained exclusively through call simulations tend to default to aggressive scripted openers that feel robotic in a live setting. Training for these situations demands practice environments that replicate the pace, pauses, and unpredictability of a real meeting room. A practical test: if your reps sound identical in practice and in live meetings, they are probably undertrained for the moments that actually matter.

How does in-person AI roleplay actually differ?

In-person AI roleplay frames scenarios around physical settings like retail floors, property showings, or branch offices, so the rep must handle contextual cues and conversational flow that mirror real meetings. Rather than following a clean sequence of opening, discovery, objection, and close, the rep practises managing interruptions, reading hesitation, and adjusting pace in real time. Scoring shifts accordingly: situational judgment, pacing, and adaptive responses carry more weight than keyword accuracy or talk-track completion.

Why do reps trained on call simulations struggle in live meetings?

Call simulations reward message recall and linear talk-track execution, but live meetings rarely follow a predictable structure. Reps trained only on calls often misread customer silence as disengagement, rush through discovery, or miss nonverbal signals that indicate a buyer is ready to close. The skill gap shows up most in unscripted moments: a customer jumps straight to pricing, a partner raises an unexpected concern, or the conversation pauses for two minutes while a child is settled. Without practised responses for these situations, reps default to restarting their script, which erodes trust in the room.

When should you make the switch?

The switch should happen whenever the majority of revenue-generating conversations take place in a shared physical space: retail, financial advising, real estate, or field sales. If managers consistently observe that reps perform well in practice but underperform in live client meetings, the training format lacks fidelity to the actual selling environment. Prioritise the transition for roles where opening minutes, body language, and conversational pacing directly influence close rates. For hybrid roles, weight training toward whichever format drives the most revenue rather than defaulting to phone simulations because they are easier to deploy.

How do you choose a platform that actually fits?

Start by evaluating whether the platform can simulate in-person scenarios with realistic, environment-specific context rather than repurposing phone call scripts with a different label. You need the ability to customise settings like a retail counter, a branch office, or a property tour so reps practise the exact situations they encounter. Scoring should measure situational judgment (pacing, listening, adaptive responses) rather than just talk-track adherence. Look for coaching data tied to specific scenario moments so managers can pinpoint where a rep rushed, missed a cue, or recovered well. Outdoo is built specifically for these in-person dynamics and worth evaluating if your team's revenue depends on what happens in the room, not on the phone.
