A 95% certification pass rate should signal a well-trained team. So why does it so often coexist with stagnant win rates, recurring compliance flags, and QA scores that tell a completely different story?

The gap between "certified" and "actually ready" is one of the most expensive blind spots in customer-facing operations. Most L&D leaders already sense it. They just lack a framework for diagnosing the problem and a clear picture of what the alternative looks like in practice.

The pattern we see repeatedly across enablement teams: organizations that replace quiz-based certification with scenario-based readiness assessments start seeing measurable improvements in ramp time and QA scores within the first quarter. Completion-rate-only programs show no reliable correlation with downstream performance metrics at all. Most organizations stop at Kirkpatrick Level 2, which measures learning rather than on-the-job behavior, and that is precisely the problem. Recall is not readiness.

The Completion Trap: Why Passing a Course Is Not the Same as Being Ready

The logic behind completion-based certification feels airtight on paper. Design a training module. Include a knowledge check. If the rep passes, they are certified. Move on.

This model measures exposure, not ability. It confirms someone consumed content and could recall enough of it to clear a multiple-choice threshold. It says nothing about whether that person can hold their ground when a buyer raises an unexpected objection, navigate a compliance-sensitive disclosure without stumbling, or adapt a pitch when the conversation veers off script.

In practice, the pattern looks like this: teams that invest heavily in onboarding and certification report high completion rates, but frontline managers still spend disproportionate time coaching the same skill gaps. New hires who "passed" certification freeze on their first live call. Tenured reps who aced a refresher course continue making the same errors in deal negotiations. The certification data says the team is trained. Every other signal says otherwise.

A 200-person BPO we observed went through exactly this cycle. Their onboarding certification pass rate hovered above 90%. Yet attrition in the first 60 days remained stubbornly high, and QA scores for newly certified agents consistently lagged tenured staff by 30 or more points. The certification was not wrong. It was simply answering the wrong question: "Did they finish?" instead of "Can they perform?"

This is not a minor calibration issue. It is a structural flaw. Completion-based certifications test the wrong thing, and then organizations make staffing, go-live, and compliance decisions based on that flawed signal.

What Traditional Certifications Actually Measure (and What They Miss)

Traditional certifications do a few things well and miss everything that actually predicts job performance.

What they capture well:

- Whether a rep completed the assigned modules

- Whether they can recall product specs, policy language, or process steps

- Whether they cleared a minimum knowledge threshold

What they miss entirely:

- Judgment under pressure: Can the rep choose the right response when a prospect pushes back in a way the training did not explicitly cover?

- Adaptive communication: Can they adjust tone, pacing, and messaging based on real-time signals from the buyer?

- Skill transfer: Can they apply a framework taught in training to a novel scenario they have never rehearsed?

Two reps can hold the same certification and perform very differently on a live call. One might handle a pricing objection with composure, reframe the conversation around value, and advance the deal. The other might default to discounting or freeze entirely. The certification treats them as equivalently prepared. The pipeline does not.

This gap is especially dangerous in high-stakes roles. Consider a rep handling HIPAA-regulated conversations, navigating fair housing disclosures, or managing a complex enterprise renewal. In these contexts, false preparedness is not just a coaching problem. It is a business risk with legal, financial, and reputational consequences.

What Verified Readiness Actually Looks Like

If completion tells you someone consumed content, readiness tells you they can perform. But "readiness" as a concept only works if you can define it precisely enough to measure.

Verified readiness means a person has demonstrated, under conditions that approximate real job pressure, that they can execute the skills their role requires. Not that they know the right answer in theory. That they can produce it in context, under time pressure, with the ambiguity and friction that real conversations involve.

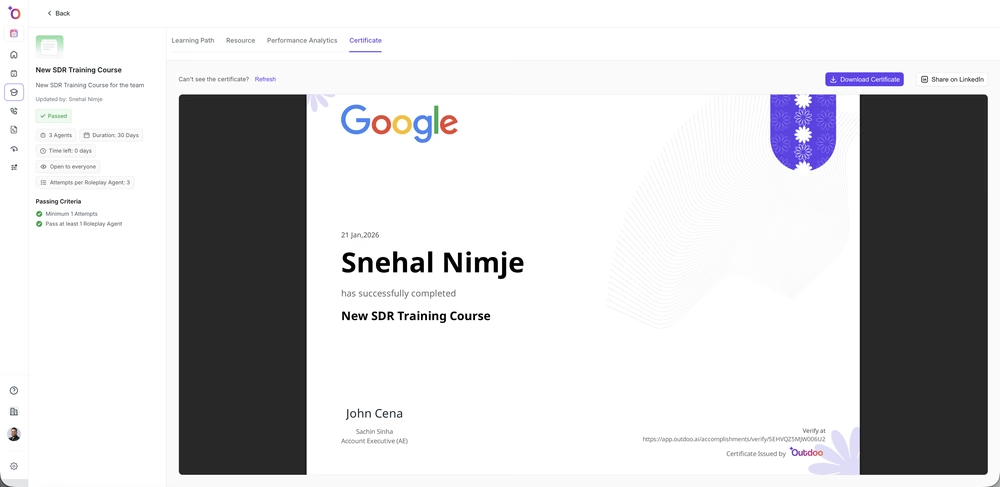

Scenario-based assessment, not quiz-based recall. Instead of asking "What are the three steps of our objection-handling framework?", a readiness assessment puts the rep into a simulated conversation where a buyer raises a specific objection. It scores whether the rep applies the framework effectively.

Competency rubrics tied to observable behavior. Rather than a binary pass/fail, readiness scoring maps to defined competencies: Did the rep acknowledge the concern before responding? Did they ask a clarifying question? Did they maintain the conversation's direction without defaulting to a script? Each of these is observable, coachable, and measurable. A practical starting rubric for an objection-handling scenario might score four dimensions on a 1-to-4 scale: acknowledgment of the concern, quality of the clarifying question, accuracy of the reframe, and strength of the next-step close.
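To make the starting rubric concrete, here is a minimal Python sketch of how the four dimensions could be recorded and checked. The class name, field names, and the "every dimension at 3 or higher" readiness rule are illustrative assumptions, not a prescribed standard; set the threshold to whatever your team is confident putting in front of a customer.

```python
from dataclasses import dataclass

# The four dimensions from the rubric described above (field names are hypothetical).
DIMENSIONS = ("acknowledgment", "clarifying_question", "reframe", "next_step_close")


@dataclass(frozen=True)
class RubricScore:
    acknowledgment: int
    clarifying_question: int
    reframe: int
    next_step_close: int

    def __post_init__(self):
        # Each dimension is scored on the 1-to-4 scale.
        for name in DIMENSIONS:
            value = getattr(self, name)
            if not 1 <= value <= 4:
                raise ValueError(f"{name} must be between 1 and 4, got {value}")

    def total(self) -> int:
        return sum(getattr(self, name) for name in DIMENSIONS)

    def weakest(self) -> list[str]:
        # The lowest-scoring dimension(s): a natural starting point for coaching.
        lowest = min(getattr(self, name) for name in DIMENSIONS)
        return [name for name in DIMENSIONS if getattr(self, name) == lowest]


def is_ready(score: RubricScore, min_per_dimension: int = 3) -> bool:
    # Assumed rule: ready only if no single dimension falls below the minimum,
    # so a strong close cannot mask a missing acknowledgment.
    return all(getattr(score, name) >= min_per_dimension for name in DIMENSIONS)
```

Scoring per dimension rather than as one total is what lets a manager see that a rep who "nearly passed" is specifically weak on clarifying questions, instead of working from a general impression.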

A clear threshold that means something. "Certified" should not mean "finished the course." It should mean "demonstrated, in a realistic scenario, that they can perform this skill at a level we are confident putting in front of a customer." That is a higher bar, and it is the right one.

The distinction matters most at the moment of go-live. When a new hire finishes onboarding, the question should not be "Did they complete all assigned modules?" It should be "If this person takes a live call tomorrow, what is our confidence level that they will handle it well?" Readiness-based systems are built around that question. Completion-based systems cannot answer it.

Measuring Behavioral Change: What L&D Leaders Should Track

The metrics you report on should change the conversation with executive stakeholders, not just the dashboard. Leadership doesn't frame weak results as a training problem. They frame them as an ROI problem, and when a new VP asks what the certification budget actually produced, "completion rate" is rarely a satisfying answer.

Deprioritize:

- Course completion rates (still useful for compliance tracking, but no longer a proxy for preparedness)

- Quiz scores (retain as a knowledge baseline, but do not treat as a performance indicator)

- Time spent in training (measures effort, not outcome)

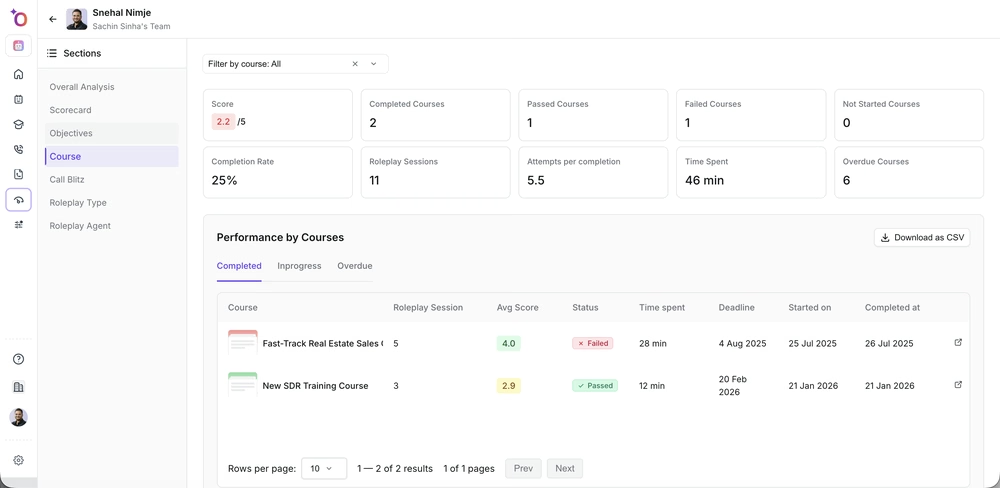

Prioritize:

- Scenario pass rates: What percentage of reps can demonstrate the target skill in a realistic assessment?

- Time to readiness: How quickly do new hires reach the performance threshold, not just finish the curriculum?

- Pre/post performance deltas: How do QA scores, win rates, or customer satisfaction metrics change after reps complete readiness certification versus completion-only certification?

- Coaching specificity: Can managers identify exactly which competency a rep is struggling with, or are they working from general impressions?

Instead of reporting "92% of the team completed Q3 training," you can report "78% of the team demonstrated readiness on our top three deal scenarios, up from 61% last quarter, and win rates in that cohort increased by 11 points." One of those statements justifies the training budget. The other just describes activity.
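A report like that rests on two small calculations: the share of reps who demonstrated readiness, and the quarter-over-quarter change in percentage points. The sketch below is one way to compute them in Python. The data shape and the rule that a rep counts as ready only after passing every assessed scenario are assumptions made for illustration.

```python
def readiness_rate(results: dict[str, dict[str, bool]]) -> float:
    """Percentage of reps who passed every scenario they were assessed on.

    `results` maps a rep id to {scenario name: passed?}.
    A rep with no recorded scenarios is treated as not ready (assumed rule).
    """
    if not results:
        raise ValueError("no reps assessed")
    ready = sum(
        bool(scenarios) and all(scenarios.values())
        for scenarios in results.values()
    )
    return 100 * ready / len(results)


def point_change(previous_rate: float, current_rate: float) -> float:
    # Difference in percentage points, not a relative percent change.
    return current_rate - previous_rate
```

Reporting the change in percentage points keeps the claim easy to verify: moving from 61% to 78% is a 17-point gain, which is a different statement from a relative increase of roughly 28%.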

When the Shift Becomes Urgent

Not every team needs to overhaul its certification model tomorrow. But there are clear signals that the cost of staying with completion-based metrics has crossed the threshold from tolerable to dangerous.

Regulated conversations. If your reps handle HIPAA, financial services, fair housing, or pharmaceutical compliance interactions, a false positive on certification can result in regulatory penalties, lawsuits, or license revocations. In these environments, "they passed the quiz" is not a defensible answer when something goes wrong.

High-value or complex sales. Enterprise deal cycles with multiple stakeholders, long timelines, and significant revenue at stake demand reps who can think on their feet. A certification that tests whether they memorized the pricing tiers does not prepare them for a CFO who challenges the ROI model in real time.

Rapid scaling or high turnover. When you are onboarding large cohorts quickly, whether for a BPO operation, a seasonal ramp, or hypergrowth hiring, the gap between "completed training" and "ready for live interactions" compounds fast. Every rep who goes live before they are truly ready creates downstream cost in churn, escalations, and brand damage.

Persistent coaching gaps despite high certification rates. This is the clearest diagnostic signal. If managers are still spending significant time coaching fundamentals that certification supposedly covered, your certification is not measuring what it claims to measure.

Choosing a Platform That Certifies Real Readiness

Most tools that claim to assess "skills" still just ask reps to pick the right answer from a list. Here is what to evaluate, ranked by importance.

Non-negotiable: Scenario-based practice that requires performance, not selection. The platform must put reps into situations where they have to produce a response, whether through AI-driven conversation simulations, structured role-plays, or recorded assessments. If the hardest thing a rep has to do is pick the correct answer from four options, you are still in the completion model.

High priority: Competency-level scoring with coaching visibility. Managers need to see not just "pass/fail" but which specific competencies each rep demonstrated or fell short on. This turns certification data into coaching data, which is where the real ROI lives.

High priority: Integration with your existing workflow. A readiness platform that lives outside your LMS, CRM, and coaching cadence will become another disconnected tool. Look for solutions that feed data into the systems managers already use.

Important but secondary: AI-driven feedback and scalability. For teams with more than 50 reps, the ability to run scenario-based assessments at scale without requiring a live evaluator for every session becomes a practical necessity. Outdoo is built around this model, using AI-driven practice scenarios to verify whether reps can actually perform before they are certified and surfacing competency-level data that managers can act on in coaching.

Worth asking about: Evidence of outcome correlation. Can the vendor show you data, from their own customers or from research, that their readiness assessments predict on-the-job performance better than traditional certification? If they cannot, you are trading one unvalidated signal for another.

The Bigger Shift: From Measuring Activity to Measuring Capability

Completion-based models treat training as a compliance exercise: did the rep do the thing we asked them to do? Readiness-based models treat training as a performance system: can the rep do the thing we need them to do?

That distinction ripples outward. It changes how you design curriculum, around scenarios rather than content modules. It changes how you allocate coaching time, targeted to specific skill gaps rather than general reinforcement. It changes how you make go-live decisions, based on demonstrated capability rather than calendar milestones. And it changes how you justify the training budget, tied to performance outcomes rather than activity metrics.

The teams that make this shift do not just get better data. They get better performance, because the certification process itself becomes a practice and feedback loop rather than a gate that reps pass through and forget.

For customer-facing enablement teams evaluating platforms, Outdoo is worth a close look because it treats certification as performance proof, not content consumption. The hardest part of this whole transition, though, is not the tooling. It is getting your organization to accept that a 95% pass rate might be the least informative number on your dashboard.

Frequently Asked Questions

Completion-based certification confirms that someone finished a course and passed a knowledge check. Verified readiness goes further by assessing whether a person can apply what they learned in realistic, pressure-filled scenarios, such as handling objections on a live call or navigating a compliance-sensitive conversation. The simplest test: if your certification can be passed by someone with a good memory and no actual skill, it is measuring completion, not readiness.

The most common objection is "our current program works fine." The fastest way to address it is with a simple audit: pull certification pass rates alongside QA scores or win rates for the same cohort. When managers see a 94% pass rate sitting next to flat or declining performance data, the conversation shifts from "why change?" to "what do we replace it with?" Start with a single team as a pilot rather than a full rollout. Managers who see their own reps improve become internal advocates faster than any executive mandate.

Start by observing how people perform in simulated or live scenarios that mirror the complexity of their actual role. This can include role-play evaluations, recorded call reviews scored against defined competency rubrics, or AI-driven practice environments that assess responses in context. Pairing these methods with pre- and post-training performance data from CRM outcomes or QA scores gives L&D leaders a clear picture of whether training actually shifted behavior. The key is measuring the same competency both before and after the intervention so the delta is attributable, not assumed.

The cost depends on whether you use live evaluators, AI-driven simulations, or a hybrid. Live role-plays scored by managers are effective but expensive beyond about 20 to 30 reps per cohort because of the time investment. AI-driven platforms reduce the per-assessment cost significantly and allow reps to practice multiple times without scheduling constraints. The real cost calculation, though, is comparative: what does it cost you when reps go live before they are ready? If you can quantify the downstream expense of early attrition, compliance incidents, or lost deals, the investment in scenario-based assessment almost always pays back within two quarters.

Look for a solution that goes beyond content delivery and quiz scoring to include scenario-based practice where reps must demonstrate skills in context, not just recall facts. Key criteria include the ability to simulate realistic conversations, score responses against specific competency frameworks, and surface data that managers can act on during coaching. You also want a platform that integrates into your existing workflow rather than adding another disconnected tool. Outdoo is built around this model, using AI-driven practice scenarios to verify whether reps can actually perform before they are certified.
