Most sales teams record every call. Very few actually learn from them.

The volume problem is real: managers cannot listen to every conversation, and the calls they do review are usually deals that have already closed or already been lost. By then, the coaching window has passed.

Call scoring solves this by turning every conversation into structured, comparable data. According to Gartner, by 2026 the majority of customer-facing teams will use AI to score calls automatically, moving from sampling a handful of conversations to evaluating every one.

This guide covers what call scoring is, how the methods compare, what a strong scorecard actually includes, why most call scoring programs fail, and how to roll one out in a way that ties scores directly to pipeline performance.

What Is Call Scoring? (And Why It Matters in 2026)

Call scoring is a method for evaluating the quality of a sales or support conversation against a defined set of performance criteria. Each call receives a score based on how well the rep executed the behaviors that drive outcomes - things like discovery depth, objection handling, value articulation, and how clearly the next step was set.

The data captured comes from call recordings and transcripts, which are then evaluated either manually by managers or automatically by AI. While call scoring originated in contact centers, it is now standard practice across B2B sales, customer success, and revenue operations teams.

There are two layers to how a call gets scored:

Together, these give a complete picture of conversation quality. Below are the core dimensions most teams use when scoring sales calls in 2026:

The shift in 2026 is that scoring is no longer a manager activity. With AI doing the work, every call is evaluated, every rep gets feedback, and scoring becomes a continuous performance system rather than a periodic audit.

Manual vs Rule-Based vs AI Call Scoring: What's the Difference?

There are three core approaches to call scoring, and most teams use a combination as they mature. Understanding the trade-offs is critical because the wrong approach burns manager time without improving rep performance.

Manual scoring still has a place: managers reviewing one or two calls per rep per week to add qualitative depth. But it cannot scale. A team of 30 reps making 8 calls a day generates 1,200 calls over a five-day week. Even at 15 minutes per review, that is 300 hours of manager time every week. No team has that capacity.
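The estimate above is simple multiplication. Here is a quick Python check that assumes a five-day selling week, which the 1,200-calls figure implies:

```python
def weekly_review_hours(reps: int, calls_per_day: int,
                        minutes_per_review: float, days_per_week: int = 5) -> float:
    """Manager hours needed to manually review every call in one week."""
    calls_per_week = reps * calls_per_day * days_per_week  # 30 * 8 * 5 = 1,200
    return calls_per_week * minutes_per_review / 60         # minutes -> hours


print(weekly_review_hours(reps=30, calls_per_day=8, minutes_per_review=15))  # 300.0
```

Even at 5 minutes per call, the same team would need 100 hours of review every week.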

Keyword-based scoring solves the volume problem but breaks on context. A rep can say "I understand your concern about pricing" and trigger the right keywords without actually addressing the objection well.

Generative AI scoring evaluates whether the rep actually handled the objection by looking at the full conversation flow, not just matching words. This is why many sales teams are moving past keyword systems entirely.
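The keyword failure above can be shown with a minimal Python sketch of a keyword rule. The trigger phrases and point values are hypothetical and are not taken from any particular product:

```python
# Hypothetical keyword rule for "objection handling": 5 points per phrase, capped at 10.
OBJECTION_PHRASES = ("i understand your concern", "pricing", "return on investment")


def keyword_objection_score(transcript: str) -> int:
    """Score 0-10 by counting which trigger phrases appear anywhere in the text."""
    text = transcript.lower()
    hits = sum(1 for phrase in OBJECTION_PHRASES if phrase in text)
    return min(10, hits * 5)


# The rep acknowledges the pricing objection, then changes the subject.
weak_reply = "I understand your concern about pricing. Anyway, let me show you the dashboard."
print(keyword_objection_score(weak_reply))  # 10: full marks, objection never answered
```

The rule cannot tell acknowledging an objection apart from resolving it. Judging whether the reply actually answers the objection requires reading the surrounding conversation, and that is the gap context-aware scoring targets.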

Why Most Call Scoring Programs Fail (And How to Fix Them)

Call scoring is widely adopted, but most programs underperform. Across the patterns we see, the failure modes are predictable.

1. Scoring criteria built once, never updated

Sales motions evolve. Messaging changes. New competitors enter. But scorecards built at the start of the year are still being used in Q4 with criteria that no longer reflect what wins deals. Strong programs review and refresh scorecards quarterly.

2. Scores never reach the rep

Managers score calls but reps don't see the results, or they see them in a separate system disconnected from their daily workflow. If a rep can't act on feedback within a day or two, the coaching impact is lost.

3. Scorecards measure activities, not outcomes

"Did the rep ask 5 questions" is an activity. "Did the questions surface quantified business impact" is an outcome. Scorecards built around activity counts produce reps who hit checkboxes without improving conversation quality. Data-driven coaching works only when scoring measures the right things.

4. No connection between score and pipeline

The point of scoring is to predict and improve revenue outcomes. If your scoring data sits in a coaching tool while pipeline data sits in your CRM, you have no way to know whether your highest-scoring reps actually convert better. Keeping scores and pipeline data in one system solves this.

5. Reviewer bias when humans score

Two managers scoring the same call will produce different scores. Add team favorites, recency bias, and subjective interpretation, and even well-intentioned manual scoring becomes inconsistent. AI scoring removes this variability: every call is scored against the same rubric, every time.

6. Scoring without practice

Telling a rep their objection handling scored 4/10 doesn't help them get to 8/10 unless they have a way to practice. The teams that see real improvement pair scoring with AI roleplay so reps can immediately rehearse the exact behavior that scored low.

What Should a Sales Call Scorecard Include? (Free Template)

An effective scorecard is short, weighted, and tied to behaviors that predict deal progression. The template below works for most B2B discovery and demo calls. Customize the criteria and weights to match your sales motion.

The weights matter as much as the criteria. Discovery depth and pain quantification carry the most weight because they predict whether a deal progresses to evaluation. A high score on opening clarity but a low score on pain quantification means the call sounded good but didn't advance the deal.
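The following minimal sketch shows how weights change the outcome. The five criteria and their weights are hypothetical placeholders, not the template's actual values, and ratings are assumed to be on a 0-10 scale:

```python
# Hypothetical weights (they sum to 1.0); adjust to your own sales motion.
WEIGHTS = {
    "discovery_depth": 0.30,
    "pain_quantification": 0.30,
    "objection_handling": 0.15,
    "next_step_clarity": 0.15,
    "opening_clarity": 0.10,
}


def weighted_score(ratings: dict[str, float]) -> float:
    """Combine 0-10 ratings per criterion into a single weighted 0-10 score."""
    return round(sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS), 2)


# Polished opening, shallow discovery: sounds good, doesn't advance the deal.
polished = {"discovery_depth": 4, "pain_quantification": 2, "objection_handling": 6,
            "next_step_clarity": 5, "opening_clarity": 10}
# Plain opening, deep discovery with quantified pain.
substantive = {"discovery_depth": 8, "pain_quantification": 8, "objection_handling": 6,
               "next_step_clarity": 5, "opening_clarity": 4}

print(weighted_score(polished))     # 4.45
print(weighted_score(substantive))  # 6.85
```

The polished call earns a perfect opening score but still lands well below the substantive one, because discovery and pain quantification carry most of the weight.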

Most modern sales platforms now ship with built-in scorecard templates that map directly to frameworks like MEDDIC, BANT, or SPIN. The advantage of using a methodology-aligned scorecard is that scoring becomes consistent across deals, regions, and segments.

How to Score Sales Calls Using MEDDIC, BANT, or SPIN

Frameworks give scoring structure. Instead of inventing criteria from scratch, mapping your scorecard to a methodology your team already uses ensures scoring criteria align with how reps qualify and progress deals.

MEDDIC scoring

Score each call against the six MEDDIC components: Metrics, Economic Buyer, Decision Criteria, Decision Process, Identify Pain, Champion. Each component becomes a section of the scorecard with sub-criteria. Best suited to complex enterprise deals.

BANT scoring

Evaluate Budget, Authority, Need, and Timeline. Faster to score and best suited for transactional or mid-market motions where qualification needs to happen quickly.

SPIN scoring

Score based on the type and sequence of questions asked: Situation, Problem, Implication, Need-payoff. Most useful for discovery-heavy sales motions where question quality determines call quality.

The right framework depends on your sales motion. Teams running multiple motions often configure separate scorecards for each - one for SDR cold calls (BANT-light), one for AE discovery (MEDDIC), one for enterprise multi-stakeholder calls (custom).
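Configuring one scorecard per motion amounts to a lookup from call type to criteria. The sketch below uses hypothetical motion names, and the custom enterprise criteria are illustrative only:

```python
# Hypothetical scorecard configuration, one entry per sales motion.
SCORECARDS = {
    "sdr_cold_call": {  # BANT-light: only what an SDR can confirm on a first call
        "framework": "BANT",
        "criteria": ["Need", "Authority", "Timeline"],
    },
    "ae_discovery": {
        "framework": "MEDDIC",
        "criteria": ["Metrics", "Economic Buyer", "Decision Criteria",
                     "Decision Process", "Identify Pain", "Champion"],
    },
    "enterprise_multi_stakeholder": {  # custom criteria, illustrative only
        "framework": "custom",
        "criteria": ["Stakeholder mapping", "Champion", "Decision Process",
                     "Mutual action plan"],
    },
}


def scorecard_for(motion: str) -> list[str]:
    """Return the criteria to score for a call, based on its sales motion."""
    try:
        return SCORECARDS[motion]["criteria"]
    except KeyError:
        raise ValueError(f"no scorecard configured for motion {motion!r}") from None


print(scorecard_for("sdr_cold_call"))  # ['Need', 'Authority', 'Timeline']
```

Failing loudly on an unknown motion is deliberate in this sketch. Otherwise a new call type could silently be scored against the wrong framework.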

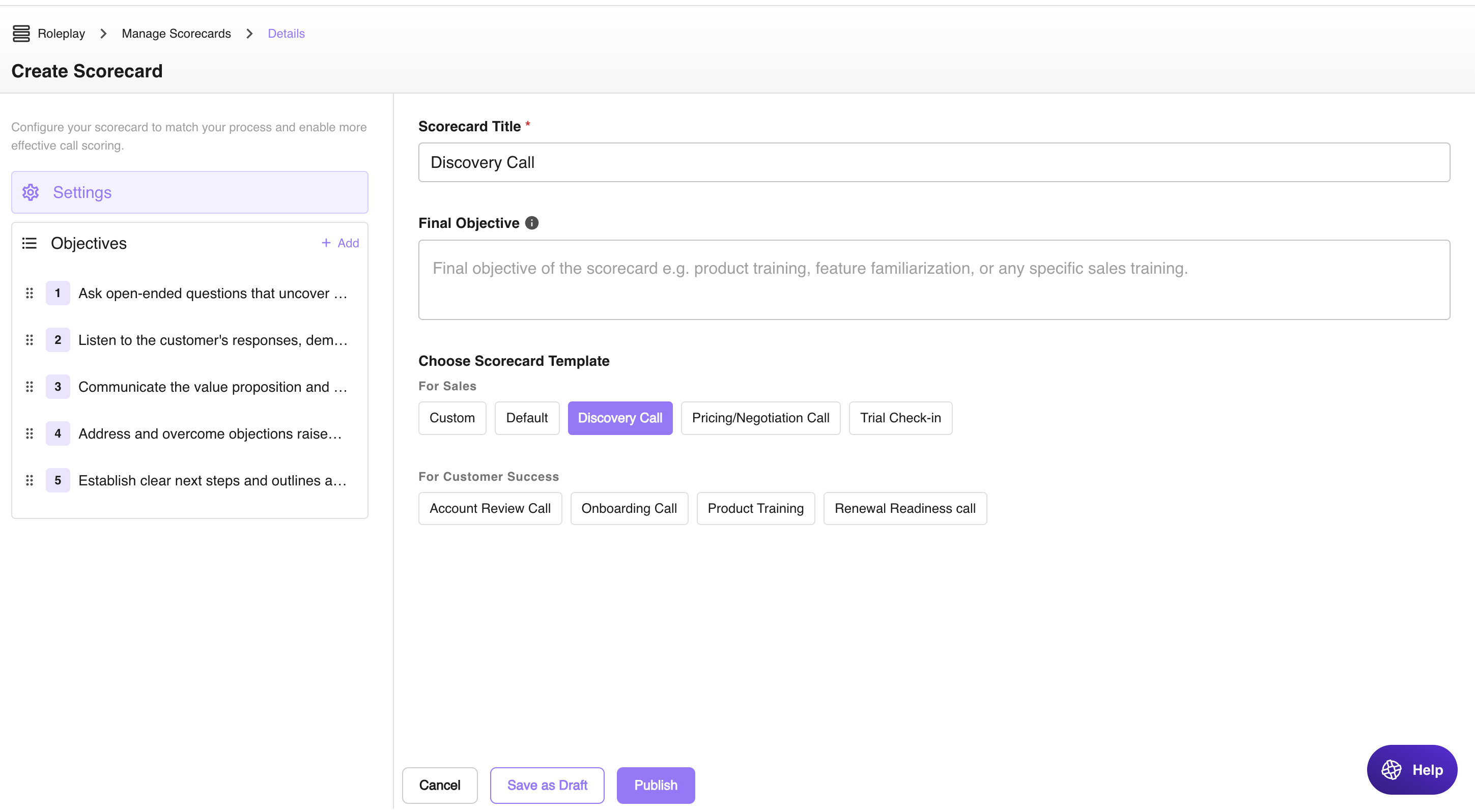

See how easy it is to create a custom scorecard with Outdoo using your own playbooks, MEDDIC, BANT, or other frameworks:

Call Scoring vs Lead Scoring: What's the Difference?

These two terms are often confused. Both produce scores, but they measure entirely different things and serve different teams.

The two are complementary. Lead scoring tells you which prospects deserve a call. Call scoring tells you whether the call was any good once it happened.

How to Roll Out AI Call Scoring in Your Team (Step-by-Step)

Most call scoring deployments fail not because of the technology but because of weak rollout. Here is the sequence that works.

Step 1: Define what you're scoring for

Start with the outcome. Are you trying to improve discovery quality? Reduce ramp time? Increase win rates? The criteria you score against should map directly to that outcome. Generic scorecards that try to measure everything end up measuring nothing useful.

Step 2: Build a methodology-aligned scorecard

Use a framework your reps already understand: MEDDIC, BANT, SPIN, or your internal sales playbook. Limit the scorecard to 5-7 criteria; beyond that, reviewer attention drops sharply. Add weights so reps know which behaviors matter most. Sales coaching works best when reps know exactly what they're being measured on.
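The 5-7 criteria limit and the weighting rule are easy to enforce when a scorecard is defined as data. The following minimal Python check assumes weights are expressed as fractions that should sum to 1. That convention is our assumption, not a requirement of any framework:

```python
import math


def validate_scorecard(weights: dict[str, float]) -> list[str]:
    """List problems with a weighted scorecard definition; an empty list means it passes."""
    problems = []
    if not 5 <= len(weights) <= 7:
        problems.append(f"expected 5-7 criteria, got {len(weights)}")
    if any(w <= 0 for w in weights.values()):
        problems.append("every criterion needs a positive weight")
    total = sum(weights.values())
    if not math.isclose(total, 1.0):  # weights summing to 1 keep the score on the rating scale
        problems.append(f"weights sum to {total:.2f}, not 1.00")
    return problems


too_many = {f"criterion_{i}": 0.1 for i in range(10)}
print(validate_scorecard(too_many))  # ['expected 5-7 criteria, got 10']
```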

Here's how easy it is to customize scorecards in Outdoo for specific sales scenarios:

Step 3: Connect scoring to your CRM

Scores stuck in a separate coaching tool don't change behavior. Push every score into the CRM deal record so account executives, managers, and revenue operations see scoring data in the same place they manage pipeline. This is also what makes it possible to correlate scoring patterns with deal outcomes.

Step 4: Score every call, not a sample

The biggest unlock from AI is the ability to score 100% of calls. Sampling produces statistical noise, and you might miss the calls that actually matter. Full coverage means every rep gets feedback on every call, and managers get a complete view of team performance.
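How likely is sampling to miss the calls that matter? Under simple assumptions, a random sample drawn without replacement follows a hypergeometric distribution. The sketch below assumes a hypothetical rep with 40 calls in a week (8 a day for 5 days), 3 of which went badly, and a manager who reviews 2 at random:

```python
from math import comb


def miss_probability(total_calls: int, problem_calls: int, reviewed: int) -> float:
    """Chance that a random review sample (without replacement) contains no problem calls."""
    return comb(total_calls - problem_calls, reviewed) / comb(total_calls, reviewed)


print(round(miss_probability(40, 3, 2), 3))  # 0.854: most weeks, the bad calls go unseen
print(miss_probability(40, 3, 40))           # 0.0: full coverage cannot miss them
```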

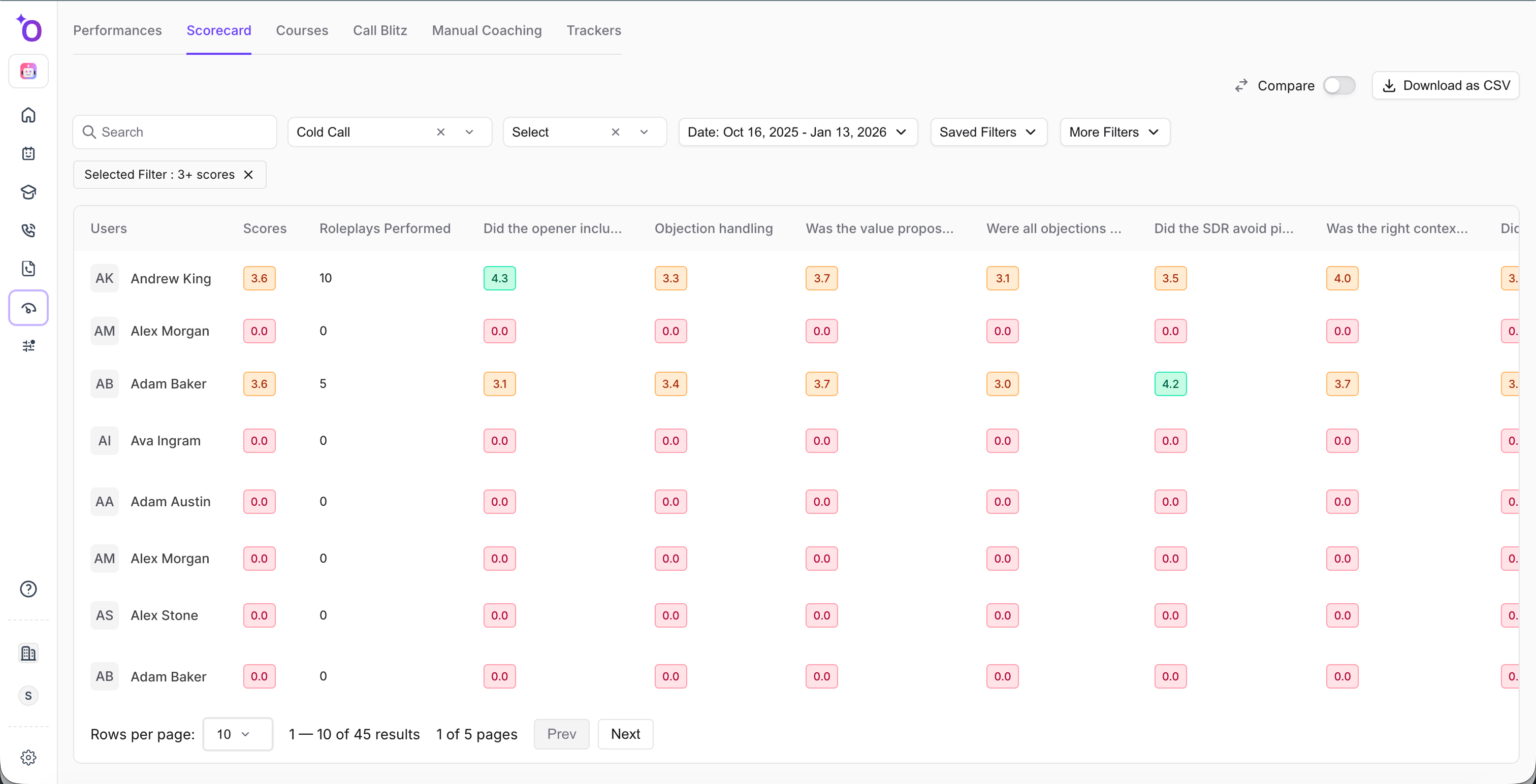

Here's how calls are scored and evaluated in Outdoo AI:

Step 5: Tie scoring to coaching and practice

This is the step most teams skip. Identifying that a rep scored low on objection handling is only useful if they have a way to practice and improve. Pair scoring with targeted AI roleplay sessions that focus on the specific behaviors that scored low. This is what turns scoring from a measurement system into a performance system.

Step 6: Review and refresh scorecards quarterly

Sales motions evolve. Update scoring criteria every quarter based on what is actually winning deals. Look at the calls that converted to qualified opportunities and identify the behaviors that showed up consistently. Add those to the scorecard. Drop criteria that don't correlate with outcomes.

Call Scoring Metrics That Actually Predict Pipeline Performance

Not every metric a scoring tool can produce is worth tracking. The ones that matter are the ones that correlate with deal progression, conversion, and win rates.

1. Discovery depth → progression to demo

Calls where reps surface quantified business impact early are significantly more likely to progress to a second meeting. Discovery depth is one of the strongest leading indicators of deal velocity.

2. Talk-to-listen ratio → buyer engagement

When reps dominate the conversation (above 60% rep talk time), buyer engagement drops and meeting conversion suffers. The ideal ratio varies by call type: discovery calls should skew toward the buyer talking, while demos can be more balanced.
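Talk-to-listen ratio is a simple aggregate over a diarized transcript. This minimal sketch assumes a hypothetical input format of timed segments labeled "rep" or "buyer"; overlapping speech and silence are ignored:

```python
from dataclasses import dataclass


@dataclass
class Segment:
    speaker: str  # "rep" or "buyer" (assumed labels from a diarized transcript)
    start: float  # seconds from call start
    end: float    # seconds from call start


def rep_talk_share(segments: list[Segment]) -> float:
    """Fraction of total speaking time attributed to the rep."""
    rep = sum(s.end - s.start for s in segments if s.speaker == "rep")
    total = sum(s.end - s.start for s in segments)
    return rep / total if total else 0.0


REP_TALK_LIMIT = 0.60  # the 60% threshold mentioned above

call = [Segment("rep", 0, 90), Segment("buyer", 90, 120),
        Segment("rep", 120, 250), Segment("buyer", 250, 300)]
share = rep_talk_share(call)
print(f"rep talk share: {share:.0%}", "(over limit)" if share > REP_TALK_LIMIT else "")
# rep talk share: 73% (over limit)
```

A single limit keeps the sketch short. Because the right ratio differs by call type, a real setup might store one limit per call type instead.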

3. Stakeholder coverage → win rate

Single-threaded deals, where reps engage only one contact, convert at a fraction of the rate of multi-threaded deals. Scoring stakeholder mapping during discovery helps surface single-threading early, while there's still time to expand. Pipeline reviews that incorporate stakeholder coverage signals are far more accurate.
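Flagging single-threaded deals only requires the buyer-side attendees of each call on a deal. The sketch below assumes those attendee lists are available. The email addresses are hypothetical examples on a reserved domain:

```python
def buyer_contacts(calls: list[list[str]]) -> set[str]:
    """Distinct buyer-side contacts who joined any call on the deal."""
    return {contact for attendees in calls for contact in attendees}


def is_single_threaded(calls: list[list[str]]) -> bool:
    """True when the deal has engaged at most one buyer contact so far."""
    return len(buyer_contacts(calls)) <= 1


# Each inner list holds the buyer-side attendees of one call (hypothetical data).
deal_a = [["dana@acme.example"], ["dana@acme.example"], ["dana@acme.example"]]
deal_b = [["dana@acme.example"], ["dana@acme.example", "lee@acme.example"]]
print(is_single_threaded(deal_a), is_single_threaded(deal_b))  # True False
```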

4. Next-step clarity → deal velocity

Calls that end with a specific scheduled next step (date, attendees, agenda) progress significantly faster than calls that end with vague follow-up commitments. This single metric is one of the simplest and most reliable predictors of deal momentum.
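The "specific next step" test above can be written as a three-part check on date, attendees, and agenda. This minimal sketch assumes the next step has already been extracted from the call into a hypothetical `NextStep` record:

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Optional


@dataclass
class NextStep:
    scheduled_for: Optional[datetime] = None  # when the follow-up happens
    attendees: list[str] = field(default_factory=list)
    agenda: str = ""


def next_step_is_specific(step: NextStep) -> bool:
    """A next step counts as specific only if it has a date, attendees, and an agenda."""
    return step.scheduled_for is not None and bool(step.attendees) and bool(step.agenda.strip())


vague = NextStep(agenda="Touch base sometime next week")
specific = NextStep(datetime(2026, 3, 12, 15, 0), ["buyer CFO", "AE"], "Review ROI model")
print(next_step_is_specific(vague), next_step_is_specific(specific))  # False True
```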

5. Methodology adherence → forecast accuracy

Deals that follow a consistent qualification framework throughout discovery produce more accurate forecasts. When call scoring tracks methodology adherence at every stage, RevOps gains a leading indicator of which deals will actually close in-quarter.
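Methodology adherence can be tracked per deal as the share of framework components evidenced so far. The sketch below uses MEDDIC. It assumes a scorer already tags each call with the components it found evidence for, and those tags are hypothetical:

```python
MEDDIC = ("Metrics", "Economic Buyer", "Decision Criteria",
          "Decision Process", "Identify Pain", "Champion")


def meddic_adherence(calls: list[set[str]]) -> tuple[float, list[str]]:
    """Share of MEDDIC components evidenced across a deal's calls, plus the gaps."""
    covered = set().union(*calls)
    missing = [component for component in MEDDIC if component not in covered]
    return (len(MEDDIC) - len(missing)) / len(MEDDIC), missing


# Components a scorer flagged as evidenced on each call (hypothetical output).
deal_calls = [{"Identify Pain", "Metrics"}, {"Champion"}, {"Economic Buyer", "Metrics"}]
coverage, gaps = meddic_adherence(deal_calls)
print(round(coverage, 2), gaps)  # 0.67 ['Decision Criteria', 'Decision Process']
```

The list of gaps is likely more actionable than the coverage number itself. It tells the rep and RevOps exactly what the next call still needs to establish.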

5 Best Practices for Effective Call Scoring

Once your scoring system is in place, the operational practices below determine whether reps actually improve.

How Outdoo Approaches AI Call Scoring

Outdoo is built for teams that want call scoring to drive measurable behavior change, not just generate dashboards. The platform connects scoring, coaching, and practice into one closed-loop system.

Here is what makes the approach different from standalone scoring tools:

The shift Outdoo enables is from scoring as an audit to scoring as a continuous performance system. Reps see their scores within hours, not weeks. Managers spend coaching time on insights rather than chasing recordings. And enablement teams get visibility into which behaviors actually drive revenue.

For a deeper look at the full platform capabilities, see AI Call Scoring and AI Coaching.

Conclusion

Call scoring works when it stops being a manager activity and becomes a continuous performance system. The technology is solved: every call can now be scored automatically, against any framework, with consistent and unbiased evaluation.

What still separates teams that improve from teams that don't is whether scoring is connected to coaching, whether reps can act on feedback within days, and whether practice closes the loop on the behaviors that scored low.

Done well, call scoring becomes a self-reinforcing system: scores identify gaps, coaching addresses them, practice reinforces the new behavior, and the next set of scores validates whether it worked. Done poorly, it produces dashboards no one acts on. The difference is rarely the tool; it's how the tool is used.

To get started with Outdoo, schedule a demo today!

Frequently Asked Questions

Call scoring evaluates sales calls against key metrics like listening, questioning, and closing skills. It helps identify areas for improvement, optimize coaching, and improve customer outcomes.

Call scoring is the process of evaluating sales calls against a defined set of criteria such as discovery depth, objection handling, talk-to-listen ratio, and next-step clarity. The score measures conversation quality and is used to identify coaching opportunities, replicate top performer behaviors, and track rep improvement over time. AI now allows every call to be scored automatically rather than sampled by managers.

Calls can be scored manually, through keyword-based systems, or using AI, which provides scalable, context-aware insights like sentiment and intent analysis.

Effective call scoring should correlate with pipeline outcomes. Track whether high-scoring calls progress to the next stage at higher rates, whether high-scoring reps hit quota more consistently, and whether coaching tied to scoring data improves rep performance over time. If scoring data does not predict deal progression, the criteria need to be refined.
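The first of those checks can be sketched in a few lines of Python. The 7.0 cut-off and the data are hypothetical. With real data you would want far more calls, and a significance test, before drawing conclusions:

```python
def progression_rates(calls: list[tuple[float, bool]],
                      threshold: float = 7.0) -> tuple[float, float]:
    """Return (progression rate for calls scoring >= threshold, rate for the rest)."""
    def rate(group: list[bool]) -> float:
        return sum(group) / len(group) if group else float("nan")

    high = [progressed for score, progressed in calls if score >= threshold]
    low = [progressed for score, progressed in calls if score < threshold]
    return rate(high), rate(low)


# Hypothetical (call score, progressed to next stage?) pairs.
history = [(8.5, True), (7.2, True), (9.0, False), (7.9, True),
           (6.1, False), (4.3, False), (6.8, True), (5.5, False)]
print(progression_rates(history))  # (0.75, 0.25)
```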

Outdoo goes beyond traditional call scoring by combining AI-driven analysis with AI sales roleplay and coaching. Reps can practice real scenarios, get personalized feedback, and track their improvement over time, while managers gain actionable insights to replicate top-performer behaviors and optimize every customer interaction.
