A company spends $2,000 per rep on a two-day sales methodology workshop. Reps leave energized. Managers feel good about the investment. Six weeks later, discovery calls sound exactly the same as they did before. The playbook sits unopened in a shared drive. The new framework shows up in exactly zero recorded calls.

This pattern repeats in sales organizations of every size, and most enablement teams already know it. What they lack is a clear diagnosis of why it keeps happening and a practical alternative that produces lasting behavior change. The answer is not more content, better slides, or a different methodology. It is a fundamental shift in how practice is structured.

The enablement teams that break this cycle share a consistent pattern: they don't buy better training. They build practice systems. That distinction is what separates programs that change behavior from programs that just feel productive.

The Real Reasons Training Doesn't Stick

Memory decay gets all the blame, but it is only one of several forces working against even excellent training. The Ebbinghaus forgetting curve is commonly cited as showing that people lose roughly 70% of new information within a week and up to 87% within a month without reinforcement. That statistic matters, but it does not explain the full problem.

The transfer gap is the biggest silent killer. Classroom exercises bear almost no resemblance to the pressure of a live deal conversation. A rep who can explain the MEDDIC framework on a whiteboard may freeze when a CFO pushes back on pricing in a real meeting. Knowing a framework and executing it under cognitive load are entirely different skills. Most training programs only test the first.

Manager reinforcement rarely happens. Frontline managers are the single most important lever for behavior change after training, yet most are stretched across pipeline reviews, forecasting, hiring, and their own quota pressure.

In practice, "manager reinforcement" at most companies means a Slack message saying "remember to use the new framework" followed by silence. Reps leave a workshop with no structured way to practice what they learned and no one holding them accountable for applying it. The training becomes an event rather than a process.

What good manager involvement actually looks like is far more specific: ten minutes per week reviewing two or three recorded practice drills per rep, with written feedback on one targeted behavior. That is the minimum effective dose. Anything less, and the manager's role degrades into vague encouragement that reps learn to ignore.

Incentive structures work against new behaviors. A rep who already hits 90% of quota with their existing approach has little motivation to adopt an unfamiliar framework that will feel awkward for weeks. Without a practice environment where reps can stumble safely, the rational choice is to revert to what already works well enough.

Enablement teams mistake completion for competency. When the success metric is "percentage of reps who finished the certification," the program optimizes for attendance, not behavior change. A team with 100% completion and zero observable change in call quality has not succeeded. It has produced a vanity metric.

Some will argue that better coaching, not more practice, is the real solution. Coaching is essential, but it is insufficient alone. A frontline manager with ten direct reports and one hour a week for coaching gets six minutes per rep. Six minutes cannot create the repetition volume needed to rewire a behavior. Coaching tells a rep what to fix. Practice is where they actually fix it.

The core issue is not that sales training content is bad. Most methodologies are sound. The issue is that organizations treat knowledge transfer as the finish line when it is actually the starting line. What comes after, structured and repeated skill practice, is where behavior actually changes.

The Knowledge-to-Skill Gap Most Enablement Teams Miss

A pilot who has memorized every procedure in the manual still needs hundreds of hours in a flight simulator before anyone trusts them with passengers. Sales works the same way, except most organizations provide almost none of that practice time.

To make this concrete, here is a study we did with a prospect: a 200-person SaaS sales team rolled out a new discovery framework through a standard two-day workshop. Post-training quiz scores averaged 88%. But call review data showed that the framework's recommended open-ended discovery questions appeared in only 22% of recorded calls four weeks later. The team then introduced daily five-minute roleplay drills targeting one discovery question type per session. After six weeks of daily drills, framework usage on recorded calls rose to 58%. The content was identical. The only variable was structured repetition.

Three signs your team has a practice problem, not a content problem:

- Reps can explain the methodology but don't use it on recorded calls. If post-training call reviews show the same habits as before, the issue is not comprehension. It is execution under pressure.

- New hires ramp slowly despite thorough onboarding content. When reps have access to everything they need to know but still take months to perform, the bottleneck is practice volume, not information volume.

- Coaching conversations keep addressing the same skill gaps quarter after quarter. Repetitive coaching feedback signals that reps are not getting enough practice repetitions between sessions to actually rewire the behavior.

Quick diagnostic you can run this week: Pull the last ten coaching notes your managers wrote for their team. If three or more reps received feedback on the same skill gap that was addressed in training more than 60 days ago, you have a practice deficit.
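The diagnostic above can be sketched as a small script. This is a minimal illustration, not a prescribed tool: the `(rep, skill, note_date)` record layout, the `practice_deficit` function name, and the sample data are all assumptions made for the example. Only the thresholds (last ten notes, three or more reps, more than 60 days) come from the text.

```python
from collections import defaultdict
from datetime import date


def practice_deficit(notes, trained_on, today, min_reps=3, min_days=60, last_n=10):
    """Return skills that trip the diagnostic, mapped to the reps flagged.

    notes:      iterable of (rep, skill, note_date) tuples (hypothetical layout)
    trained_on: dict mapping skill -> date it was covered in training
    """
    # Keep only the most recent `last_n` coaching notes.
    recent = sorted(notes, key=lambda n: n[2])[-last_n:]

    reps_by_skill = defaultdict(set)
    for rep, skill, _ in recent:
        covered = trained_on.get(skill)
        # Count only skills whose training is more than `min_days` old.
        if covered is not None and (today - covered).days > min_days:
            reps_by_skill[skill].add(rep)

    # A skill is flagged when enough distinct reps got feedback on it.
    return {s: reps for s, reps in reps_by_skill.items() if len(reps) >= min_reps}


# Illustrative data only.
today = date(2025, 6, 1)
trained_on = {"pricing objection": date(2025, 3, 1), "discovery": date(2025, 5, 15)}
notes = [
    ("Ana", "pricing objection", date(2025, 5, 20)),
    ("Ben", "pricing objection", date(2025, 5, 22)),
    ("Cy", "pricing objection", date(2025, 5, 27)),
    ("Dee", "discovery", date(2025, 5, 28)),
]
flagged = practice_deficit(notes, trained_on, today)
print(sorted(flagged))
```

A non-empty result suggests a practice deficit on the listed skills. In a real team the notes would need to be tagged by skill first, which is the manual part of the exercise.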

How Micro-Learning Roleplay Produces Actual Behavior Change

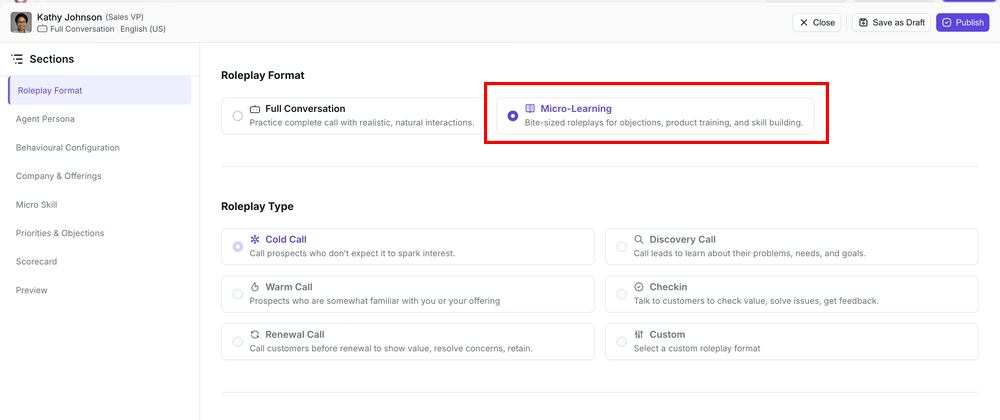

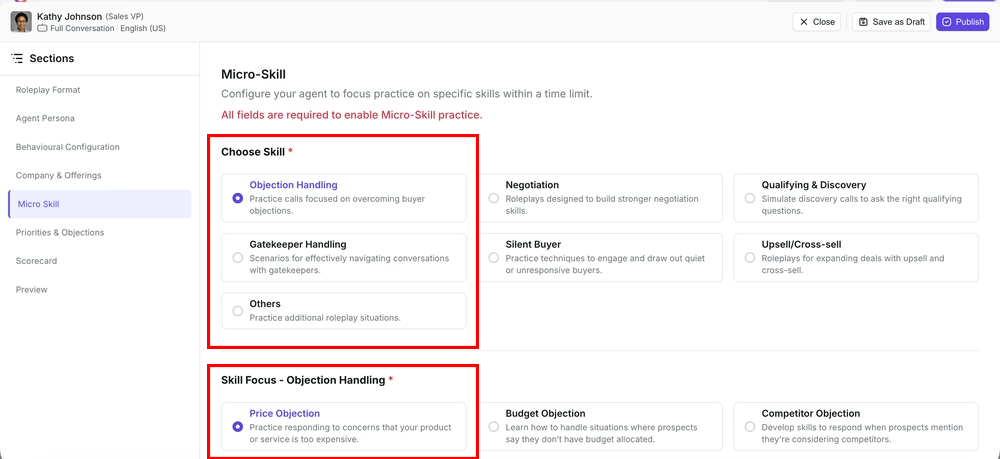

Full call simulations last 20 to 30 minutes, happen infrequently, and test too many skills at once for any single one to improve. Micro-learning roleplay flips that model. A rep spends five minutes handling a pricing objection or practicing a specific discovery question under pressure. The next day, they drill a different scenario. Over a week, they accumulate practice across five or six discrete skills without sitting through a marathon simulation.

This approach mirrors how procedural memory forms: through high-volume repetition of a specific behavior rather than passive review of an entire methodology. Musicians practice scales before performing concertos. Surgeons drill individual suture techniques before performing full procedures.

Here is what a single well-designed drill actually looks like:

That is the level of specificity a drill needs. A generic "handle an objection" prompt teaches nothing. A drill that forces a rep to execute a specific sequence of behaviors under realistic pressure builds the muscle memory that transfers to live calls.

A realistic weekly micro-learning schedule for a sales team:

Total time investment: roughly 30 minutes per rep per week. Compare that to a full-day offsite that pulls reps out of selling entirely and produces knowledge that decays within a month.

When to Introduce Structured Practice Into Your Enablement Program

Two to four weeks after a new framework has been introduced is the highest-return window for structured practice. At that point, reps can articulate the key concepts but still struggle to apply them under live deal pressure. That gap between "I understand it" and "I can execute it when a buyer goes off script" is exactly what practice closes.

Introducing practice at this stage ensures reps drill the right behaviors rather than reinforce incorrect habits. Teams that wait too long, hoping reps will "figure it out on live calls," pay the cost in lost deals and extended ramp times. Teams that introduce practice too early, before reps share a vocabulary, end up with reps practicing the wrong things.

What to measure (and what to stop measuring):

Stop treating training completion rate as a success metric. It tells you nothing about behavior change. Track these three indicators instead:

- Framework element usage on recorded calls. Use your call recording platform to tag specific behaviors (e.g., "opened with a business-relevant question," "anchored to ROI before discussing price"). Track the percentage of calls where these behaviors appear at weeks 2, 4, and 8 post-training. A meaningful target: 50% or higher usage rate by week 6.

- Drill engagement rate. Not completion rate, but the percentage of assigned drills that reps actually attempt. If this drops below 70% in week 2, you have an adoption problem that needs manager reinforcement, not more content.

- Manager-rated competency on targeted skills. Ask managers to rate each rep on a 1 to 4 scale for the specific skill being drilled, once before the practice program starts and once at the four-week mark. Aggregate the shift. This is the closest proxy to "did behavior actually change?"
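As a rough sketch of how the three indicators above might be computed once the data has been exported: the function names, the tag strings, and the sample numbers below are hypothetical, and real call recording platforms will expose tags in their own formats.

```python
def usage_rate(calls, behavior):
    """Share of calls tagged with `behavior`; each call is a set of tags."""
    if not calls:
        return 0.0
    return sum(1 for tags in calls if behavior in tags) / len(calls)


def engagement_rate(assigned, attempted):
    """Share of assigned drills that reps actually attempted."""
    return attempted / assigned if assigned else 0.0


def mean_rating_shift(before, after):
    """Average change in manager rating (1 to 4 scale) for reps rated twice."""
    shared = before.keys() & after.keys()
    if not shared:
        return 0.0
    return sum(after[rep] - before[rep] for rep in shared) / len(shared)


# Illustrative data only: tagged calls grouped by weeks since training.
calls_by_week = {
    2: [{"roi_anchor"}, set(), set(), {"open_question"}],
    4: [{"roi_anchor"}, {"roi_anchor", "open_question"}, set(), set()],
    8: [{"roi_anchor"}, {"roi_anchor"}, {"roi_anchor"}, set()],
}
trend = {week: usage_rate(calls, "roi_anchor") for week, calls in calls_by_week.items()}

# Week-2 engagement below 70% is the adoption warning described above.
needs_manager_followup = engagement_rate(assigned=20, attempted=13) < 0.70

shift = mean_rating_shift({"Ana": 2, "Ben": 1, "Cy": 3}, {"Ana": 3, "Ben": 3})
print(trend, needs_manager_followup, shift)
```

Reps rated only once are excluded from the rating shift so that the before and after averages describe the same people.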

Choosing a Practice Platform That Fits Your Team

Most roleplay tools on the market were designed for assessment, not daily practice. They default to full call simulations lasting 20 to 30 minutes, a format that is useful for evaluating overall call management but too long, and therefore too infrequent, to build automatic responses. For mid-market sales teams with a coaching-focused culture, short-drill platforms are the stronger choice because they fit into the workflow reps already have rather than competing with it.

Outdoo is one platform built specifically around this micro-learning roleplay model. It offers AI-driven buyer scenarios that sales managers can align to their team's frameworks and roll out without heavy configuration, which solves the adoption challenge that kills most practice initiatives before they gain traction. It is worth evaluating alongside any other tools on your shortlist, particularly if your team's bottleneck is execution rather than knowledge.

Conclusion

The sales training industry has spent decades optimizing knowledge transfer: better content, sharper facilitators, more polished slide decks. That investment isn't wasted, but it's incomplete.

Here is the threshold that separates teams that actually change behavior from those that just train: reps need a minimum of 20 focused practice repetitions on a single skill before it starts appearing reliably on live calls. Quarterly workshops provide two or three at best. Daily five-minute drills hit that threshold in under a month.
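The arithmetic behind that comparison can be checked with a short sketch. It assumes five working days per week, one drill per day, and every drill targeting the same skill; the helper name `periods_to_threshold` is made up for the example.

```python
import math


def periods_to_threshold(reps_per_period, threshold=20):
    """Number of whole periods needed to accumulate `threshold` repetitions."""
    if reps_per_period <= 0:
        raise ValueError("reps_per_period must be positive")
    return math.ceil(threshold / reps_per_period)


# One drill per working day on the same skill: 5 repetitions per week.
weeks_with_daily_drills = periods_to_threshold(5)

# A quarterly workshop at the upper end of "two or three" repetitions.
quarters_with_workshops = periods_to_threshold(3)

print(weeks_with_daily_drills, quarters_with_workshops)
```

Under these assumptions daily drills reach 20 repetitions in four weeks, while workshops alone would take seven quarters. If drills rotate across several skills during the week, the count per skill grows proportionally more slowly.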

If you recognize this pattern, where training content is solid but on-call execution doesn't match, the fix is not another workshop. It is a structured practice program with enough repetition volume to make new skills automatic. For enablement leaders building that program, Outdoo is worth a serious look; its short-drill format and manager-assignable scenarios solve the specific workflow and adoption problems that stall most practice initiatives.

The teams that pull ahead in the next two years will not be the ones with the best training content. They will be the ones that practiced more.

Frequently Asked Questions

Knowledge transfer covers frameworks, product messaging, playbooks, and methodology. Skill practice is repeated execution of specific behaviors under realistic conditions until they become automatic. A useful test: if your reps can pass a written quiz on the methodology but do not use it on recorded calls, you have a knowledge-to-skill gap, not a knowledge gap. The practical implication is budget allocation: if more than half your enablement spend goes to content creation and less than 20% goes to structured practice, you are likely over-indexed on knowledge and under-indexed on skill.

Start by framing drills as performance tools, not compliance tasks. Reps who see practice as "more training homework" will disengage within a week. The most effective approach is to pilot with your top performers first, not your struggling reps. When a team's best closer publicly endorses five-minute daily drills because they sharpened her objection handling, peer adoption follows naturally. Keep drills under five minutes, make them opt-in during the first two weeks, and share anonymized before-and-after call quality data so reps can see the connection between practice and pipeline outcomes.

Micro-learning roleplay breaks full simulations into short drills that isolate one skill at a time. A rep might drill handling a pricing objection in a single five-minute session. This mirrors how procedural memory forms: through high-volume repetition of a specific behavior rather than passive review. Over the course of a month, daily five-minute drills add up to more total practice reps than most teams get in a full quarter of traditional training. The compounding effect matters: each session reinforces the neural pathways built in prior sessions, so a rep who drills discovery questions five times is meaningfully sharper than one who practiced once for 25 minutes.

Wait until foundational knowledge transfer is complete and reps share a common vocabulary around your methodology. Once reps can articulate the framework but still struggle to apply it under live deal pressure, that gap signals the right moment for structured practice. For most teams, this window opens two to four weeks after a new methodology is introduced. Introducing practice too early leads to reps drilling bad habits. The test: can 80% of your team explain the core framework unprompted? If yes, they are ready for drills. If not, finish the knowledge phase first.

Prioritize platforms that support short, targeted practice sessions rather than only full call simulations. Look for realistic buyer responses that adapt to what the rep actually says, built-in coaching feedback that references your methodology, and easy integration into the weekly workflow. Outdoo is one platform designed around this micro-learning roleplay model, offering AI-driven buyer scenarios that managers can align to their team's frameworks without heavy setup. The deciding factor should be whether the tool makes daily practice easy enough that reps actually do it. Run a two-week pilot with one team before committing to an org-wide rollout, and measure drill engagement rate alongside call quality scores to validate the investment.
