SaaS discovery calls and roleplay training determine whether a deal is real or should be dropped early, because this is the point where problem depth, business impact and ownership are validated in a live conversation.

In SaaS, this moment defines deal direction, since deals that enter pipeline without a quantified problem, a clear owner and a defined consequence rarely convert later in the cycle. Based on internal assessments across hundreds of discovery conversations, a consistent pattern emerges: deals without a clearly articulated business impact and defined ownership are significantly more likely to stall before reaching evaluation.

High-performing teams treat discovery as diagnosis rather than a checklist, focusing on measurable impact such as revenue leakage, delayed decision cycles or manual inefficiencies that consume team capacity.

This shifts how reps operate, as questions move from generic prompts to structured probes that uncover how the problem affects pipeline, productivity or cost, and what happens if it remains unresolved.

The impact compounds across the revenue engine, where strong discovery improves pipeline quality and forecast accuracy because each opportunity is anchored in verified pain, stakeholder alignment and a defined next step.

It also creates usable operational data, where patterns in question depth, talk-to-listen balance and how well reps quantify impact reveal why certain deals progress while others stall. Weak discovery introduces predictable failure points, where deals enter pipeline without urgency, without a clear problem owner and without a quantified cost of inaction, which leads to stalled conversations during evaluation or procurement.

In these cases, the issue is not effort but lack of rigor, as reps gather information but fail to build a business case that justifies change.

Teams that improve discovery standardize preparation, questioning frameworks and signal interpretation across the funnel, which turns discovery from an individual skill into a repeatable system that directly influences revenue outcomes.

SaaS Discovery Call Frameworks and Metrics

1. Discovery Frameworks and Metrics

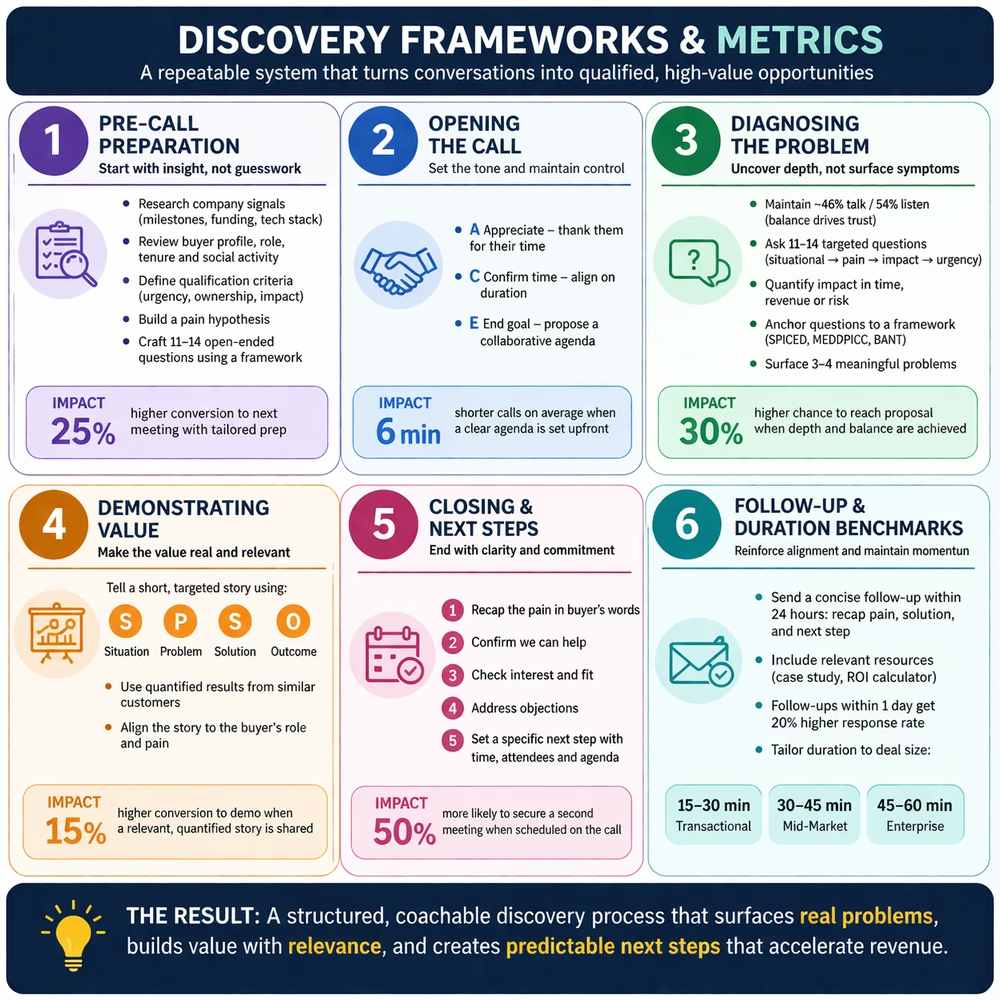

A consistent framework turns discovery into a repeatable system that improves qualification accuracy and deal progression, because when calls follow a defined sequence such as prepare, open, diagnose, demonstrate, close and follow up, reps know what signals to capture and buyers understand how the conversation will unfold.

Our internal analysis of hundreds of SaaS discovery calls shows that deals handled within a structured approach move faster from qualification to evaluation, mainly because elements like problem ownership, quantified impact and next steps are established early instead of being uncovered later.

2. Pre-Call Preparation

Preparation starts before the call, where top performers build a working hypothesis about the account instead of entering without context, combining company research with role-specific assumptions about likely challenges.

They study company signals such as funding, hiring trends, product launches and market positioning, then connect those signals to possible pressure points like pipeline gaps, inefficiencies or expansion targets.

At the individual level, they assess the buyer's role, tenure and activity to understand what that person is responsible for and how they are measured, which allows questions to be framed around outcomes rather than tasks.

They then define qualification criteria upfront, including urgency, ownership and business impact, and prepare 11 to 14 open-ended questions aligned to frameworks like MEDDPICC or SPICED, ensuring coverage of metrics, decision process and priorities.

In our internal assessment, calls where reps enter with a clear hypothesis and tailored questions consistently reach deeper insights early, while unprepared calls stay at surface level and require additional meetings to reach the same clarity.

The trade-off is time investment, since deep preparation can take up to an hour, which is why strong teams standardize research workflows and reuse insights across similar accounts to maintain efficiency.

3. Opening the Call

The opening shapes how the conversation unfolds, because this is where structure is either established or lost depending on how clearly expectations are set.

The ACE model, Appreciate, Confirm time and End goal, works because it aligns both sides without adding friction, positioning the call as a shared diagnostic rather than a one-sided interrogation.

A strong opening also signals preparation, especially when the rep references a relevant insight tied to the prospect's context, which immediately differentiates the conversation from generic outreach.

In our analysis, calls that begin with a clear agenda and contextual framing move into meaningful discussion faster, while those without structure spend time aligning before reaching substance.

The nuance lies in execution, since overly scripted openings can feel rigid, so experienced reps maintain structure while adapting tone and phrasing to keep the conversation natural.

4. Diagnosing the Business Problem

Diagnosis is where discovery creates or loses leverage, because this is the stage where the problem is either clearly defined and quantified or left vague.

Top performers maintain a balanced talk-to-listen ratio, allowing the buyer to explain their situation while the rep guides the conversation through structured questioning.

They sequence questions intentionally, starting with current state, then moving to pain, followed by impact and finally urgency, ensuring that each layer builds on the previous one.

The key difference lies in how impact is handled, since strong reps push beyond identifying problems and quantify them in terms of time, revenue or missed opportunities, which turns abstract pain into a concrete business case.

Our internal data shows that deals where impact is clearly quantified during discovery are more likely to progress, while those that remain at qualitative pain statements often stall later due to lack of urgency.

Frameworks provide structure, but effective reps combine them with active listening and follow-up questions that adapt to the conversation, ensuring depth without sounding mechanical.

5. Demonstrating Value through Storytelling

Once the problem is defined, value must be anchored in real outcomes rather than presented as features, which is where storytelling becomes effective.

A concise narrative that follows situation, problem, solution and outcome allows the buyer to relate their context to a real example, especially when the outcome includes measurable results.

The effectiveness of storytelling depends on relevance and precision, since generic examples do not resonate, while targeted stories that mirror the buyer's role and industry create alignment.

In our analysis, discovery calls that include at least one relevant and quantified story are more likely to move forward, as they help the buyer visualize the impact of change.

The nuance is in restraint, since overusing stories can shift focus away from diagnosis, so strong reps use them selectively to reinforce key points.

6. Closing and Next Steps

The final stage determines whether the conversation leads to momentum, because without a clear next step, even well-executed discovery calls lose direction.

A structured closing sequence ensures alignment by summarizing the problem, confirming the ability to help, checking for interest and setting a specific next step.

High-performing reps are precise when proposing next steps, including participants, agenda and timing, which reduces friction and increases follow-through.

Our internal review shows that when the next meeting is scheduled during the call, progression to the next stage increases significantly compared to cases where scheduling is deferred.

The challenge is often behavioral, as reps may hesitate to ask for commitment, but clarity at this stage prevents ambiguity later in the process.

7. Follow-Up and Duration Benchmarks

Follow-up reinforces what was established during the call, ensuring both sides remain aligned on the problem, solution and next steps.

A concise follow-up within 24 hours that summarizes key points and confirms actions helps maintain momentum and reduces the risk of misalignment.

Including relevant resources tailored to the discussion adds value, especially when those resources directly support the identified problem.

Timing matters, as follow-ups sent within a day receive higher engagement than delayed responses, which often lose context.

Call duration should match deal complexity, with shorter calls for transactional deals and longer sessions for enterprise discussions, while deviations often indicate either lack of preparation or insufficient depth.

SaaS Discovery Call Roleplay Scenarios and Practical Prompts

Frameworks define what good discovery looks like, but roleplay determines whether reps can execute under real pressure, because live conversations rarely follow structure and buyers bring interruptions, skepticism and competing priorities into every call.

In our internal assessment of discovery calls and training simulations, a clear pattern emerges: reps who practice against realistic scenarios are significantly better at maintaining structure while adapting to buyer behavior, which directly impacts deal progression and call quality.

Below are four high-frequency SaaS discovery scenarios, each mapped to real buyer dynamics and paired with a detailed prompt that can be used in tools like ChatGPT or Claude to simulate the interaction.

1. Technical Buyer with Limited Time

Technical stakeholders such as CTOs or engineering leaders often approach discovery with a narrow focus on feasibility, security and integration, while operating under strict time constraints, which compresses the window for meaningful discovery.

The challenge for reps is not just answering technical questions but connecting those answers to business impact without losing momentum, since conversations that stay purely technical rarely progress beyond evaluation.

What this tests

- Ability to stay concise under pressure

- Translating technical responses into business impact

- Maintaining control despite interruptions

2. Multi-Stakeholder Discovery

In most SaaS deals, the person on the call is only one part of the decision process, while other stakeholders influence budget, security, procurement and final approval.

The risk is incomplete discovery, where the rep focuses only on the current contact and fails to uncover the broader buying group, which often leads to delays or late-stage objections.

What this tests

- Stakeholder mapping and influence identification

- Understanding decision process and approval flow

- Early detection of potential blockers

3. Reluctant or Defensive Prospect

Some buyers enter discovery calls guarded, either due to prior negative experiences or internal uncertainty, which results in limited information sharing and low engagement.

In these situations, pushing harder typically reduces trust, while passive questioning leads to shallow discovery, making this one of the most difficult scenarios to navigate.

What this tests

- Building trust under resistance

- Asking layered, context-aware questions

- Adjusting pace based on buyer behavior

4. Just Looking Buyer with Hidden Urgency

Buyers often position themselves as being in research mode, while underlying signals suggest urgency driven by internal deadlines, operational inefficiencies or external pressures.

The challenge is identifying and validating these signals without pushing too aggressively, which requires careful listening and subtle probing.

What this tests

- Identifying hidden urgency signals

- Connecting pain to timeline and impact

- Balancing curiosity with restraint

Where Generic AI Practice Falls Short

These prompts can simulate realistic conversations, but generic AI tools lack context about your product, your customers and your historical deal patterns, which limits the depth and relevance of practice.

They also do not track performance over time, meaning reps cannot see whether their questioning, positioning or closing improves across sessions, which reduces the ability to build consistent capability.

As a result, generic practice improves conversational fluency but does not ensure that those skills translate into better outcomes in real discovery calls.

Structured Practice and Performance Measurement

To make roleplay effective, practice must be connected to real conversations, measurable signals and continuous feedback, so that improvement is not subjective but based on observable changes in behavior.

This involves creating scenarios from real calls and transcripts, running simulations that reflect actual buyer dynamics, evaluating performance across dimensions like discovery depth, objection handling and value articulation, and reinforcing weak areas through targeted repetition.

Over time, this approach builds consistency, where reps are not only familiar with frameworks but can apply them under different conditions, turning discovery into a controlled, repeatable capability that improves pipeline quality and deal progression.

Create Custom SaaS Discovery Roleplay Scenarios and AI Agents

Generic prompts are useful for early experimentation, but they break in real discovery environments because they lack context, continuity and performance visibility.

Reps may improve conversational fluency, but without exposure to real buyer behavior, stakeholder dynamics and deal context, that improvement rarely translates into consistent execution in live calls.

Why Generic Prompts Break at Scale

Tools like ChatGPT or Claude generate responses based on a single prompt, which resets every session and ignores prior interactions, deal progression and buyer history.

They do not incorporate real customer language, multi-stakeholder complexity, deal stage context or performance tracking.

This leads to practice that feels realistic in isolation but fails under real discovery conditions where conversations evolve dynamically.

What Contextual Roleplay Actually Means

Contextual roleplay is defined by structured inputs that mirror real deals, not by free-form prompts.

It is built using persona-specific behavior, company and industry context, real pain points and buying signals, and defined conversation objectives.

This ensures that reps are not practicing hypothetical conversations, but preparing for situations they will encounter in actual discovery calls.

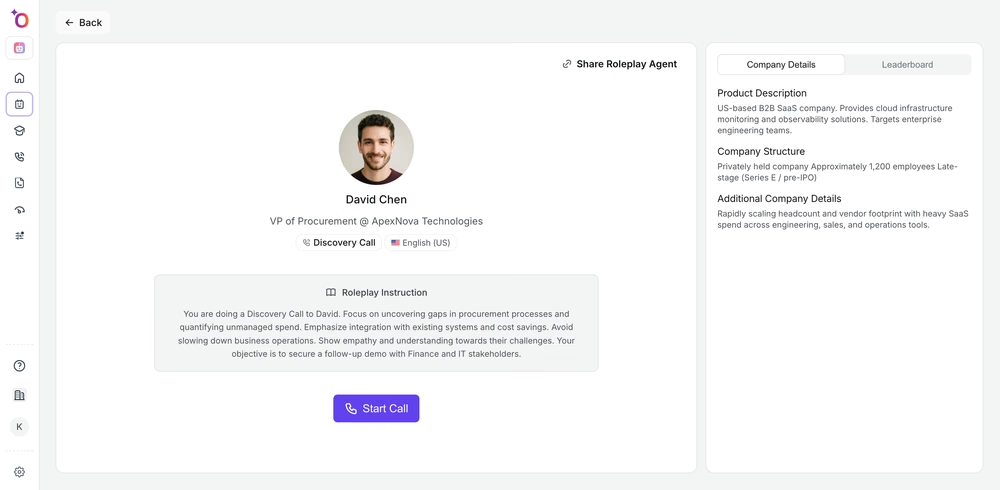

Example Scenario: Enterprise Procurement Discovery Call

To illustrate how contextual roleplay works, consider the following scenario.

You are speaking with David Chen, VP of Procurement at ApexNova Technologies, a late-stage B2B SaaS company scaling toward IPO, where procurement is decentralized, SaaS spend is fragmented and leadership is pushing for cost control and visibility.

This is a high-stakes discovery call involving CFO-driven cost pressure, lack of spend visibility, vendor sprawl and duplication, and multi-stakeholder decision complexity.

The objective is to uncover gaps, quantify impact and secure a follow-up with Finance and IT stakeholders.

Step-by-Step: Creating Roleplay Scenarios in Outdoo

Step 1: Create a Roleplay Agent

Start from the roleplay agents dashboard, where all scenarios and personas are managed. This is where teams move from isolated prompts to a structured system.

Why this step exists

When roleplays are created ad hoc, scenarios cannot be reused or improved over time. Centralizing agent creation ensures that teams build on previous scenarios instead of starting from scratch each time.

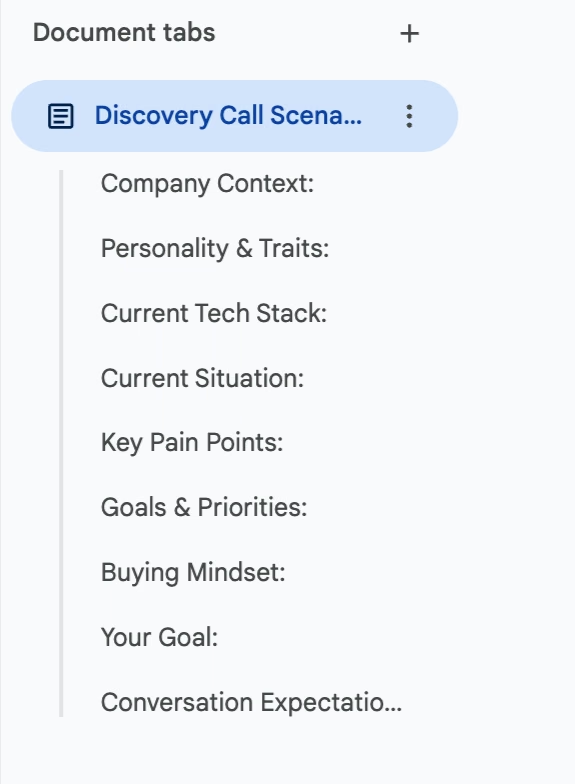

Step 2: Define Persona, Context and Objective

Inside the creation interface, define the buyer persona, company context and conversation objective. Instead of a short prompt, you structure role and responsibilities, business context and pressures, and desired outcome of the call.

What this controls

The realism of the conversation depends on how well the persona is defined. Weak inputs lead to cooperative simulations, while strong context introduces constraints such as budget pressure, stakeholder alignment and skepticism.

Step 3: Add Detailed Scenario Context

At this stage, you define the full scenario that governs how the agent behaves. This replaces shallow prompts with structured context that drives realistic interactions.

Where most roleplays fall short

Generic prompts produce predictable conversations. Once reps recognize the pattern, they optimize for the simulation instead of improving actual discovery behavior.

Step 4: Generate and Run the Roleplay Simulation

Once configured, the agent simulates a full discovery conversation with dynamic behavior. The AI interrupts, challenges weak responses, adapts based on answers and maintains persona consistency.

.gif)

What this introduces

Variability. Real discovery calls rarely follow a clean flow, and this step forces reps to respond to unexpected inputs instead of relying on memorized sequences.

Step 5: Practice in a Realistic Discovery Environment

Each agent behaves like a real buyer with defined goals, constraints and context. Reps engage in a complete discovery conversation aligned to actual deal conditions.

Where this shows up in real calls

Execution gaps. Reps who understand frameworks still struggle when conversations become unstructured, and this step closes that gap by aligning practice with real conditions.

Step 6: Observe a Full Roleplay Conversation Before Evaluation

Before scoring is applied, reps can review or experience the full interaction, capturing how they handled questioning, positioning and pacing.

What this reveals

How decisions are made during the call. Without this, feedback focuses on outcomes instead of the reasoning and flow that led to those outcomes.

Step 7: Evaluate with Discovery Scorecard

Each session is evaluated using a structured scorecard aligned to discovery best practices, including opening quality, question depth, pain identification, value articulation and objection handling.

What this standardizes

Coaching. Consistent evaluation ensures that feedback is aligned across managers and sessions, making improvement measurable instead of subjective.

Step 8: Analyze Conversation Metrics and Behavioral Signals

Beyond scoring, detailed analytics provide insight into how the conversation was conducted, including talk ratio, question rate, interaction depth and conversation pacing.

What becomes visible here

Behavioral patterns. Metrics like talk ratio and question density highlight issues that are often missed in qualitative reviews.

Step 9: Fine-Tune the Agent Without Rebuilding the Scenario

Instead of manually editing multiple fields, the agent can be refined through direct interaction. Teams can adjust persona tone, modify objections, change difficulty level and update scenario dynamics.

Why this matters in practice

Speed of iteration. Updating scenarios through structured fields is slow, while conversational fine-tuning allows teams to quickly adapt agents as products, markets and buyer behavior change.

Why Single-Persona Sales Roleplay Fails in SaaS Discovery

Most roleplay systems and generic AI tools simulate linear conversations where the buyer behaves consistently throughout the interaction, creating a controlled but unrealistic environment.

In real discovery calls, especially in mid-market and enterprise deals, multiple stakeholders are often present or enter the process early. Each stakeholder evaluates the problem differently: technical stakeholders focus on feasibility and integration, finance evaluates cost, budget ownership and ROI, and business leaders prioritize speed, outcomes and operational impact.

These perspectives rarely align by default, which forces the rep to navigate conflicting inputs while maintaining a coherent narrative. When roleplay does not simulate this complexity, reps are not prepared for the moments where discovery breaks down.

Multi-Persona Roleplay for Enterprise SaaS Discovery Calls

Multi-persona sales roleplay introduces multiple stakeholders into the same simulation, allowing reps to practice managing real buying group dynamics within a controlled environment.

For example, in enterprise discovery calls, it is common to have two or three stakeholders present, especially in procurement-led evaluations where finance and IT are already involved. Procurement focuses on vendor consolidation, negotiation leverage and cost control. Finance evaluates budget ownership, approval thresholds and return on investment. IT raises concerns around integration, security and implementation effort.

These inputs do not arrive in a clean sequence. Stakeholders may interrupt, ask parallel questions or redirect the conversation based on their priorities.

The rep must maintain clarity while addressing multiple concerns, reframe value differently for each stakeholder and prevent the conversation from fragmenting across competing priorities. This is not a linear discovery process, but an active alignment exercise.

Multi-persona roleplay trains this capability by exposing reps to overlapping signals and requiring them to adapt in real time, which reflects how enterprise discovery calls actually operate where decisions are distributed rather than centralized.

Why ChatGPT and Generic AI Roleplay Tools Fall Short

While tools like ChatGPT or Claude can simulate discovery conversations, they are not designed for multi-stakeholder discovery call roleplay.

They operate within a single conversational thread and cannot maintain multiple stakeholder perspectives simultaneously, introduce dynamic shifts in authority or priorities, reflect internal disagreements between stakeholders or evaluate how effectively the rep manages alignment.

Even with detailed prompts, the interaction remains sequential, which limits the ability to simulate real buying environments where conversations evolve across multiple participants.

This makes generic tools useful for basic practice, but insufficient for preparing reps for enterprise discovery complexity.

How to Measure Sales Discovery Call Performance Over Time

Most roleplay tools evaluate performance at a session level, which provides a snapshot but does not capture how reps improve across multiple interactions.

A structured system requires longitudinal measurement, where performance is tracked across multiple roleplay sessions, live discovery calls and different deal scenarios.

Key indicators include progression in discovery depth, consistency in quantifying business impact, accuracy in stakeholder mapping and ability to move from conversation to defined next steps.

Tracking these over time provides a clearer view of capability development rather than one-off performance.

Connecting Roleplay Scores with Real Discovery Call Performance

The most critical capability in AI sales coaching platforms is the ability to connect practice performance with real call execution.

Roleplay sessions should align with post-call scorecards from actual discovery conversations, creating a unified evaluation framework.

This allows teams to compare roleplay performance with live execution, identify where skills break under real conditions and track improvement across both environments.

By aligning scoring across practice and execution, these gaps become visible and can be addressed systematically.

You can see how this approach is applied in practice here: Case Study

Closed-Loop Coaching for SaaS Discovery Calls and Roleplay

A closed-loop coaching system connects discovery call roleplay, live execution and performance feedback into a single measurable process, ensuring that training is not isolated from real deal outcomes but directly tied to pipeline quality and conversion.

Most teams already run roleplays and review calls, but without a connected system, these activities remain fragmented, making it difficult to understand whether reps are actually improving in live discovery conversations.

The Closed-Loop Model for Discovery Improvement

A structured model follows four stages: practice, execute, validate and reinforce, where each stage feeds into the next and creates a continuous loop of improvement.

Practice prepares reps with realistic scenarios, execution captures behavior in live discovery calls, validation measures whether key skills are applied and reinforcement targets gaps through focused training.

Internal assessment across discovery calls shows that teams operating within this loop achieve higher consistency in discovery quality, because improvement is driven by real performance signals rather than isolated feedback.

AI Roleplay Engine for Realistic Discovery Call Practice

Effective AI roleplay for SaaS discovery calls must replicate how buyers behave in real situations, including interruptions, objections and shifting priorities.

Adaptive simulations allow reps to practice against different personas such as technical buyers, economic decision-makers or reluctant stakeholders, ensuring that conversations reflect actual deal conditions.

Roleplays generated from real calls, transcripts and playbooks make practice relevant, as reps engage with the same objections, language and scenarios they encounter in live conversations.

Unified AI Scoring Across Practice and Live Calls

One of the biggest challenges in sales coaching is inconsistent evaluation, where roleplay sessions and call reviews use different criteria, making it difficult to track improvement.

A unified scoring system evaluates both environments across the same dimensions, including discovery depth, objection handling, messaging accuracy, value articulation and talk track adherence.

This creates a consistent benchmark, allowing teams to compare performance across practice and execution and identify where skills break down.

Post-Call Validation and Skill Adoption

Training only creates value when it translates into behavior change in real calls, which requires validating whether skills practiced in roleplay appear during live discovery conversations.

Post-call analysis focuses on observable behaviors such as question depth, impact quantification and clarity of next steps, ensuring that coaching is based on execution rather than intent.

This connects training directly to pipeline outcomes, making it easier to understand what drives deal progression.

Personalized Micro-Roleplays and Behavioral Gap Detection

Generic coaching fails because it does not address individual performance gaps, leading to uneven capability across teams.

A closed-loop system identifies specific weaknesses such as poor stakeholder mapping, weak impact quantification or inconsistent closing, and generates targeted micro-roleplays to address those gaps.

These focused practice sessions allow reps to improve specific behaviors through repetition, leading to faster and more measurable progress.

Manager and Team Analytics for Discovery Performance

For sales leaders and enablement teams, visibility into discovery performance is critical for scaling improvement.

Aggregated analytics highlight patterns such as common discovery gaps, talk-to-listen ratios and areas where deals stall, enabling data-driven coaching and process optimization.

This allows leaders to move from anecdotal feedback to structured programs that improve discovery quality across the organization.

Limitations of Generic AI Tools for Discovery Roleplay

While tools like ChatGPT or Claude can simulate discovery conversations, they lack access to company-specific context, historical deal data and consistent scoring frameworks.

They do not track performance over time or connect practice outcomes to real pipeline metrics, which limits their ability to support structured coaching.

As a result, they are useful for basic practice but insufficient for building a scalable discovery coaching system.

Outdoo as Closed-Loop Discovery Coaching Infrastructure

Outdoo connects pre-call roleplay, live discovery conversations and post-call analysis into a unified system, enabling teams to measure whether training translates into real behavior change.

It allows one-click roleplay creation from real calls, transcripts and conversation intelligence platforms, ensuring that scenarios reflect actual buyer language and deal context.

Adaptive simulations support multi-persona discovery practice, while unified AI scoring provides consistent evaluation across both practice and live calls.

The platform identifies behavioral gaps and generates personalized micro-roleplays, ensuring that coaching is targeted and tied directly to observed performance.

A closed-loop coaching system shifts discovery from isolated practice to a continuous improvement model grounded in real data. Reps improve not only in simulations but in live calls, managers gain visibility into performance gaps and enablement teams can connect training directly to pipeline outcomes.

This turns discovery into a measurable and repeatable capability that improves conversation quality, accelerates deal progression and drives more predictable revenue outcomes.

Measuring Discovery Call Performance and Readiness

Most teams run discovery calls and roleplay exercises, but lack a clear system to measure whether reps are actually improving, which leads to inconsistent execution and unreliable pipeline signals.

Without defined metrics, discovery remains subjective, making it difficult for sales leaders, enablement teams and revenue operations to identify what good looks like and where gaps exist.

Core Metrics for SaaS Discovery Call Quality

Effective measurement starts with identifying a small set of observable signals that indicate whether a discovery call is progressing toward a qualified opportunity.

MetricWhat It IndicatesSignal of StrengthRisk IndicatorTalk-to-listen ratioConversation balanceBuyer speaks 50–60%Rep dominates conversationQuestion depthQuality of discoveryMoves from pain to impactStays at surface-levelImpact quantificationBusiness relevancePain tied to revenue, cost or timeNo measurable consequenceStakeholder coverageDeal completenessIdentifies decision roles earlyUnknown decision processNext step clarityDeal momentumSpecific meeting scheduledVague follow-up

These metrics are not isolated; they compound, meaning weak performance in one area often affects others, such as poor questioning leading to weak impact articulation and unclear next steps.

What High-Performing Discovery Calls Look Like

High-performing calls share consistent patterns that can be measured and replicated across teams.

Reps maintain balanced conversations, ask structured questions that uncover both pain and impact, and connect those insights to clear business outcomes.

They identify decision-makers early, understand approval processes and align next steps with the buyer's timeline, ensuring that the deal moves forward with clarity.

In our internal analysis, calls that meet these criteria show significantly higher conversion to second meetings and shorter sales cycles, because the problem is clearly defined and owned.

Common Failure Patterns in Discovery Calls

Poor discovery is rarely random; it follows predictable patterns that can be identified and corrected.

Reps often ask too many situational questions without progressing to impact, leading to conversations that gather information but fail to create urgency.

Another common pattern is over-talking, where reps explain the product too early instead of diagnosing the problem, which reduces buyer engagement.

Deals also stall when stakeholder mapping is incomplete, as unseen decision-makers introduce objections later in the cycle.

These patterns highlight that the issue is not lack of activity, but lack of depth and structure in how discovery is executed.

Measuring Readiness Before Live Calls

Readiness is not about completing training, but about demonstrating the ability to execute discovery frameworks under realistic conditions.

This requires evaluating roleplay sessions using the same criteria as live calls, ensuring that reps can consistently uncover pain, quantify impact and set clear next steps.

Teams that assess readiness through structured simulations see fewer breakdowns in live calls, because reps have already practiced handling interruptions, objections and complex buyer dynamics.

Linking Discovery Performance to Pipeline Outcomes

Measurement becomes valuable when it connects discovery behavior to business outcomes such as pipeline quality, deal progression and win rates.

For example, deals where impact is clearly quantified during discovery are more likely to progress to evaluation, while deals without defined ownership or urgency often stall.

By linking call-level metrics to pipeline data, revenue operations teams can identify which behaviors drive results and prioritize coaching accordingly.

This creates a feedback loop where discovery performance is continuously optimized based on actual deal outcomes.

Building a Discovery Scorecard System

A structured scorecard translates qualitative conversations into measurable data, enabling consistent evaluation across reps and teams.

An effective scorecard includes dimensions such as discovery depth, objection handling, messaging accuracy and next step clarity, with clear criteria for each level of performance.

Consistency in scoring is critical, as it allows comparisons across roleplay sessions and live calls, making it easier to track improvement over time.

Conclusion

Discovery improves when practice reflects real buying conditions and is measured against live execution, not simulated outcomes alone.

If you want to see how this works in practice, from multi-persona roleplay to unified scoring and continuous coaching, the next step is to experience it directly through a live demo tailored to your sales process and team structure.

Frequently Asked Questions

A SaaS discovery call roleplay is a structured practice exercise where sales reps simulate real discovery conversations with realistic buyer personas. Effective roleplay goes beyond scripted prompts by incorporating buyer context, stakeholder dynamics, objection patterns and measurable evaluation criteria to build skills that transfer to live calls.

The most effective SaaS discovery call frameworks follow a structured sequence of prepare, open, diagnose, demonstrate value, close and follow up. Within diagnosis, frameworks like MEDDPICC and SPICED help reps systematically uncover metrics, decision process, pain and impact. The key is combining structure with active listening.

Discovery call quality is measured through observable signals including talk-to-listen ratio, question depth, impact quantification, stakeholder coverage and next-step clarity. These metrics should remain consistent across both roleplay and live calls, and when tracked over time they reveal patterns in where reps improve or struggle.

Generic AI tools like ChatGPT operate within single conversational threads with no memory across sessions, no connection to real call data and no consistent scoring. They cannot simulate multi-stakeholder dynamics or track improvement over time, which limits their ability to build the judgment and adaptability needed for real discovery calls.

Closed-loop coaching connects practice, live execution, validation and reinforcement into a single system. Reps practice through roleplay, apply skills in real calls, get evaluated using unified scorecards and receive targeted micro-roleplays to address gaps. This creates continuous improvement tied to actual pipeline outcomes.

.svg)

.webp)